Difference between revisions of "Datasource Adapters"

| (141 intermediate revisions by 5 users not shown) | |||

| Line 40: | Line 40: | ||

_fields="String account, Integer qty, Double px" | _fields="String account, Integer qty, Double px" | ||

_pattern="account,qty,px=Account (.*) has (.*) shares at \\$(.*) px" | _pattern="account,qty,px=Account (.*) has (.*) shares at \\$(.*) px" | ||

| − | EXECUTE SELECT * FROM | + | EXECUTE SELECT * FROM file |

</syntaxhighlight> | </syntaxhighlight> | ||
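The way a `_pattern` directive maps regex groups onto named columns can be pictured with a short Python sketch. This is only an illustration under assumptions: `parse_line` is a hypothetical helper rather than an AMI function, the sample line is invented, and the doubled backslash in the AMI string is presumed to be string-level escaping, so the Python raw string uses a single `\$`.

```python
import re

# Hypothetical helper (not part of AMI): splits a "_pattern"-style spec into
# column names and a regex, then maps each capture group to a column name.
def parse_line(pattern_spec, line):
    names, regex = pattern_spec.split("=", 1)
    match = re.fullmatch(regex, line)
    if match is None:
        return None  # the line does not fit the pattern
    return dict(zip(names.split(","), match.groups()))

spec = r"account,qty,px=Account (.*) has (.*) shares at \$(.*) px"
# Invented sample line for illustration only.
row = parse_line(spec, "Account 11232 has 1000 shares at $123.20 px")
print(row)
```

The captured values are still strings at this point; converting them to the types listed in `_fields` (String, Integer, Double) is left to the reader in this sketch.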

| Line 51: | Line 51: | ||

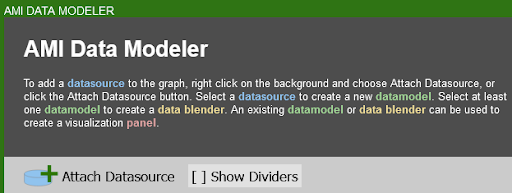

1. Open the '''datamodeler''' (In Developer Mode -> Menu Bar -> Dashboard -> Datamodel) | 1. Open the '''datamodeler''' (In Developer Mode -> Menu Bar -> Dashboard -> Datamodel) | ||

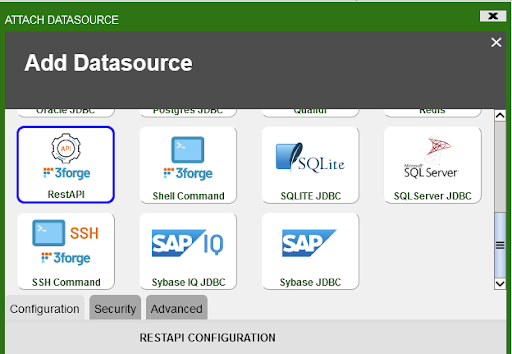

| − | 2. Choose the "''' | + | 2. Choose the "'''Attach Datasource'''" button |

3. Choose '''Flat File Reader''' | 3. Choose '''Flat File Reader''' | ||

| Line 349: | Line 349: | ||

<span style="font-family: Courier New; color: blue;">_filterIn="Data(.*)"</span> (ignore any lines that don't start with ''Data,'' and only consider the text after the word ''Data'' for processing) | <span style="font-family: Courier New; color: blue;">_filterIn="Data(.*)"</span> (ignore any lines that don't start with ''Data,'' and only consider the text after the word ''Data'' for processing) | ||
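As a rough picture of what a filter such as `_filterIn="Data(.*)"` does, the Python sketch below keeps only lines that match the expression and passes on the captured text. It assumes whole-line matching, which is a guess at the reader's behaviour; `filter_in` is a hypothetical name, and the sample lines are invented.

```python
import re

# Hypothetical illustration of a filter-in rule: keep lines matching the
# pattern and forward only the first capture group for further processing.
def filter_in(lines, pattern=r"Data(.*)"):
    kept = []
    for line in lines:
        match = re.fullmatch(pattern, line)
        if match:
            kept.append(match.group(1))
    return kept

sample = ["Data,acct1,100", "Header,ignored", "Data,acct2,200"]
print(filter_in(sample))
```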

| + | |||

| + | |||

| + | === Python Adapter Guide === | ||

| + | 1. Introduction | ||

| + | The Python adapter is a library that gives Python scripts access to both the AMI console port and the real-time port via sockets.<br> | ||

| + | The adapter is meant to be integrated with external Python libraries and does not contain a __main__ entry point. To try the simple Python demo, switch to the example branch and run demo.py.<br> | ||

| + | The adapter's default arguments should work with AMI out of the box and can be overridden through input arguments. To view the full set of arguments, run the program with the --help argument.<br> | ||

| + | |||

| + | = MongoDB adapter = | ||

| + | 1. Setup <br> | ||

| + | (a). Take the ami_adapter_mongo.9593.dev.obv.tar.gz package and copy its contents into the lib directory of your currently installed AMI (located at '''./amione/lib/'''). Make sure the package is unpacked, so that the lib directory contains the individual files ending with .jar rather than the archive itself.<br> | ||

| + | [[File:Mongo Adapter1.png]]<br> | ||

| + | |||

| + | (b). Go into your config directory (located at '''ami\amione\config''') and edit or make a '''local.properties'''<br> | ||

| + | Search for '''ami.datasource.plugins''', add the Mongo Plugin to the list of datasource plugins:<br> | ||

| + | <pre>ami.datasource.plugins=$${ami.datasource.plugins},com.f1.ami.plugins.mongo.AmiMongoDatasourcePlugin</pre> | ||

| + | Here is an example of what it might look like:<br> | ||

| + | [[File:Mongo Adapter2.png]] <br> | ||

| + | Note: '''$${ami.datasource.plugins}''' references the existing plugin list. Do not put a space before or after the comma.<br> | ||
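The "no space before or after the comma" rule is easy to get wrong when editing the property by hand. A minimal Python sketch of building the value safely follows; `append_plugin` is a hypothetical helper, not something shipped with AMI.

```python
# Hypothetical helper: appends a plugin class name to a comma-separated
# plugin list, stripping stray spaces and avoiding duplicate entries.
def append_plugin(existing, plugin):
    parts = [p.strip() for p in existing.split(",") if p.strip()]
    if plugin not in parts:
        parts.append(plugin)
    return ",".join(parts)

value = append_plugin(
    "$${ami.datasource.plugins}",
    "com.f1.ami.plugins.mongo.AmiMongoDatasourcePlugin",
)
print("ami.datasource.plugins=" + value)
```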

| + | |||

| + | (c). Restart AMI<br> | ||

| + | (d). Go to '''Dashboard->Data modeller''' and select '''Attach Datasource'''. <br> | ||

| + | [[File:Mongo Adapter3.png]]<br> | ||

| + | (e). Select MongoDB as the Datasource. Give your Datasource a name and configure the URL. | ||

| + | The URL should take the following format:<br> | ||

| + | <pre>URL: server_address:port_number/Your_Database_Name </pre> | ||

| + | |||

| + | In the demonstration below, the URL is '''localhost:27017/test'''. MongoDB listens on port 27017 by default, and we connect to the ''test'' database.<br> | ||

| + | [[File:Mongo Adapter4.png]]<br> | ||
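To make the three parts of the URL format explicit, here is a small Python sketch that splits a `server_address:port_number/Your_Database_Name` string. `parse_mongo_url` is a hypothetical helper, and falling back to port 27017 when the port is omitted is an assumption of this sketch, not documented adapter behaviour.

```python
# Hypothetical helper: splits "host:port/database" into its three parts.
def parse_mongo_url(url):
    host_port, _, database = url.partition("/")
    host, _, port = host_port.partition(":")
    # Assumption: use MongoDB's default port when none is given.
    return host, int(port) if port else 27017, database

print(parse_mongo_url("localhost:27017/test"))
```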

| + | |||

| + | 2. Send queries to MongoDB in AMI <br> | ||

| + | The AMI MongoDB adapter allows you to query a MongoDB datasource and return the results as SQL tables. | ||

| + | This section will demonstrate how to query MongoDB in AMI. The general syntax for querying MongoDB is:<br> | ||

| + | |||

| + | <syntaxhighlight lang="sql" > CREATE TABLE Your_Table_Name AS USE EXECUTE <Your_MongoDB_query> </syntaxhighlight> <br> | ||

| + | Note that whatever comes after the keyword '''EXECUTE''' is the MongoDB query, which should follow the MongoDB query syntax. | ||

| + | |||

| + | (a). Create an AMI SQL table from a MongoDB collection <br> | ||

| + | <span class="nowrap"> </span> (i). MongoDB collection example1<br> | ||

| + | In the MongoDB shell, let’s create a collection called “zipcodes” and insert a row into it.<br> | ||

| + | <syntaxhighlight lang="sql" >db.createCollection("zipcodes"); | ||

| + | db.zipcodes.insert({id:01001,city:'AGAWAM', loc:[-72.622,42.070],pop:15338, state:'MA'}); | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | <span class="nowrap"> </span> (ii).Query this table in AMI<br> | ||

| + | <syntaxhighlight lang="sql" >CREATE TABLE zips AS USE EXECUTE SELECT (String)_id,(String)city,(String)loc,(Integer)pop,(String)state FROM zipcodes WHERE ${WHERE};</syntaxhighlight> | ||

| + | |||

| + | [[File:Mongo Adapter5.png]]<br> | ||

| + | |||

| + | (b). Create an AMI SQL table from a MongoDB collection with nested columns <br> | ||

| + | <span class="nowrap"> </span> (i). Inside the MongoDB shell, we can create a collection named "myAccounts" and insert one row into the collection.<br> | ||

| + | <syntaxhighlight lang="sql"> | ||

| + | db.createCollection("myAccounts"); | ||

| + | db.myAccounts.insert({id:1,name:'John Doe', address:{city:'New York City', state:'New York'}});</syntaxhighlight> | ||

| + | <span class="nowrap"> </span> (ii).Query this table in AMI <br> | ||

| + | <syntaxhighlight lang="sql"> CREATE TABLE account AS USE EXECUTE SELECT (String)_id,(String)name,(String)address.city, (String)address.state FROM myAccounts WHERE ${WHERE};</syntaxhighlight> | ||
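The dotted column names in the query above (`address.city`, `address.state`) address fields inside a nested document. The Python sketch below shows that idea on a plain dictionary; `resolve` is a hypothetical helper for illustration and says nothing about how the adapter implements the lookup.

```python
# Hypothetical helper: walks a nested dictionary along a dotted path and
# returns None when any step of the path is missing.
def resolve(document, dotted_path):
    value = document
    for key in dotted_path.split("."):
        if not isinstance(value, dict) or key not in value:
            return None
        value = value[key]
    return value

doc = {"id": 1, "name": "John Doe",
       "address": {"city": "New York City", "state": "New York"}}
print(resolve(doc, "address.city"), "|", resolve(doc, "address.state"))
```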

| + | |||

| + | (c). Create an AMI SQL table from a MongoDB collection using EVAL methods <br> | ||

| + | <span class="nowrap"> </span> (i). '''Find''' <br> | ||

| + | |||

| + | Let’s use the myAccounts MongoDB collection that we created before and insert some rows into it. Inside MongoDB shell:<br> | ||

| + | <syntaxhighlight lang="sql"> | ||

| + | db.myAccounts.insert({id:1,name:'John Doe', address:{city:'New York City', state:'NY'}}); | ||

| + | db.myAccounts.insert({id:2,name:'Jane Doe', address:{city:'New Orleans', state:'LA'}}); | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | To create a SQL table from MongoDB containing all rows whose address state is '''LA''', enter the following command in the AMI script and hit '''test''':<br> | ||

| + | <syntaxhighlight lang="sql"> | ||

| + | CREATE TABLE account AS USE EXECUTE SELECT (String)_id,(String)name,(String)address.city, (String)address.state FROM EVAL db.myAccounts.find({'address.state':'LA'}) WHERE ${WHERE}; | ||

| + | </syntaxhighlight> | ||

| + | [[File:Mongo Adapter find.png]] <br> | ||

| + | |||

| + | <span class="nowrap"> </span> (ii). '''Limit''' <br> | ||

| + | <syntaxhighlight lang="sql"> | ||

| + | CREATE TABLE myAccounts AS USE EXECUTE SELECT (String)_id,(Double)id,(String)name,(String)address FROM EVAL db.myAccounts.find().limit(1) WHERE ${WHERE}; | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | [[File:Mongo Adapter limit.png]] | ||

| + | |||

| + | <span class="nowrap"> </span> (iii). '''Skip''' <br> | ||

| + | <syntaxhighlight lang="sql"> | ||

| + | CREATE TABLE myAccounts AS USE EXECUTE SELECT (String)_id,(Double)id,(String)name,(String)address FROM EVAL db.myAccounts.find().skip(1) WHERE ${WHERE}; | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | [[File:Mongo Adapter skip.png]] | ||

| + | |||

| + | <span class="nowrap"> </span> (iv). '''Sort''' <br> | ||

| + | <syntaxhighlight lang="sql"> | ||

| + | CREATE TABLE myAccounts AS USE EXECUTE SELECT (String)_id,(Double)id,(String)name,(String)address FROM EVAL db.myAccounts.find().sort({name:1}) WHERE ${WHERE}; | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | [[File:Mongo Adapter sort.png]] | ||

| + | |||

| + | <span class="nowrap"> </span> (v). '''Projection''' <br> | ||

| + | <syntaxhighlight lang="sql"> | ||

| + | CREATE TABLE myAccounts AS USE EXECUTE SELECT (String)_id,(Double)id,(String)name,(String)address FROM EVAL db.myAccounts.find().projection({id:0}) WHERE ${WHERE}; | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | [[File:Mongo Adapter projection.png]] | ||

= Shell Command Reader = | = Shell Command Reader = | ||

| Line 766: | Line 865: | ||

<span style="font-family: Courier New; color: blue;">_filterIn="Data(.*)"</span> (ignore any lines that don't start with ''Data,'' and only consider the text after the word ''Data'' for processing) | <span style="font-family: Courier New; color: blue;">_filterIn="Data(.*)"</span> (ignore any lines that don't start with ''Data,'' and only consider the text after the word ''Data'' for processing) | ||

| + | |||

= SSH Adapter = | = SSH Adapter = | ||

| Line 1,192: | Line 1,292: | ||

[[File:1.5.png|thumb]] | [[File:1.5.png|thumb]] | ||

| − | = REST | + | = REST Adapter = |

== Overview == | == Overview == | ||

The AMI REST adaptor aims to establish a bridge between the AMI and the RESTful API so that we can interact with RESTful API from within AMI. Here are some basic instructions on how to add a REST Api Adapter and use it. | The AMI REST adaptor aims to establish a bridge between the AMI and the RESTful API so that we can interact with RESTful API from within AMI. Here are some basic instructions on how to add a REST Api Adapter and use it. | ||

| Line 1,215: | Line 1,315: | ||

<strong>URL</strong>: The base url of the target api. (Alternatively you can use the direct url to the rest api, see the directive _urlExtensions for more information) <br> | <strong>URL</strong>: The base url of the target api. (Alternatively you can use the direct url to the rest api, see the directive _urlExtensions for more information) <br> | ||

<strong>Username</strong>: (Username for basic authentication)<br> | <strong>Username</strong>: (Username for basic authentication)<br> | ||

| − | <strong>Password</strong>: (Password for basic authentication) | + | <strong>Password</strong>: (Password for basic authentication)<br> |

</code> | </code> | ||

| − | + | ||

== How to use the 3forge RestAPI Adapter == | == How to use the 3forge RestAPI Adapter == | ||

| Line 1,262: | Line 1,362: | ||

api = Api(app) | api = Api(app) | ||

</syntaxhighlight> | </syntaxhighlight> | ||

| + | |||

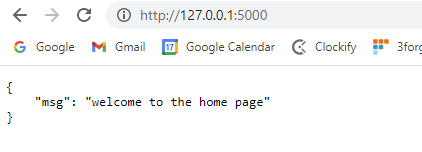

| + | ==== Create the class Home and set up home page ==== | ||

| + | |||

| + | <syntaxhighlight lang="python"> | ||

| + | class Home(Resource): | ||

| + |     def __init__(self): | ||

| + |         pass | ||

| + |     def get(self): | ||

| + |         return { | ||

| + |             "msg": "welcome to the home page" | ||

| + |         } | ||

| + | api.add_resource(Home, '/') | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | The add_resource function registers the Home class at the root of the url, which then returns the message “welcome to the home page”. | ||

| + | |||

| + | |||

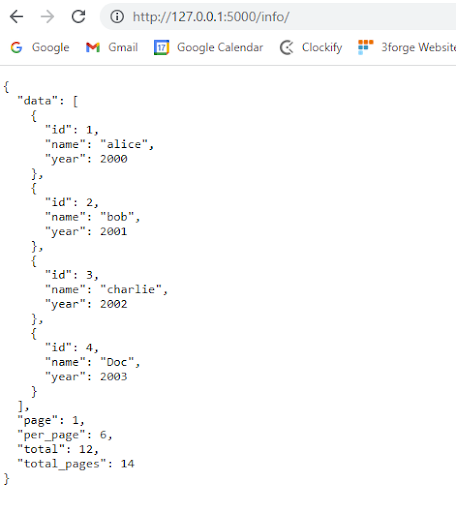

| + | ==== Add endpoints onto the root url ==== | ||

| + | |||

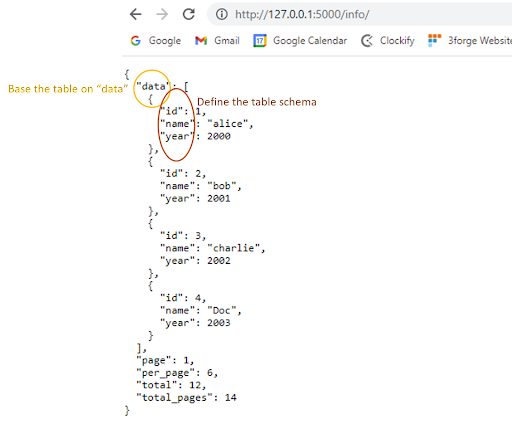

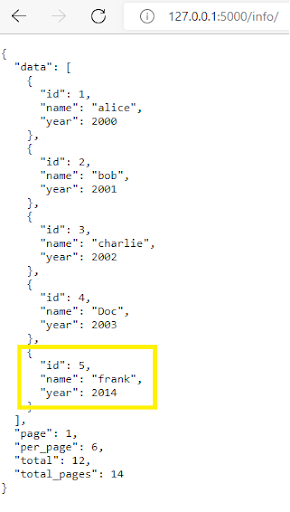

| + | The following code snippet defines the variable “all”, whose contents are returned in JSON format when we hit the url: 127.0.0.1:5000/info/ | ||

| + | |||

| + | <syntaxhighlight lang="python"> | ||

| + | #show general info | ||

| + | #url:http://127.0.0.1:5000/info/ | ||

| + | all = { | ||

| + | "page": 1, | ||

| + | "per_page": 6, | ||

| + | "total": 12, | ||

| + | "total_pages":14, | ||

| + | "data": [ | ||

| + | { | ||

| + | "id": 1, | ||

| + | "name": "alice", | ||

| + | "year": 2000, | ||

| + | }, | ||

| + | { | ||

| + | "id": 2, | ||

| + | "name": "bob", | ||

| + | "year": 2001, | ||

| + | }, | ||

| + | { | ||

| + | "id": 3, | ||

| + | "name": "charlie", | ||

| + | "year": 2002, | ||

| + | }, | ||

| + | { | ||

| + | "id": 4, | ||

| + | "name": "Doc", | ||

| + | "year": 2003, | ||

| + | } | ||

| + | ] | ||

| + | } | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | ==== Define GET,POST,PUT and DELETE methods for http requests ==== | ||

| + | * GET method | ||

| + | <syntaxhighlight lang="python"> | ||

| + | @app.route('/info/', methods=['GET']) | ||

| + | def show_info(): | ||

| + |     return jsonify(all) | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | * POST method | ||

| + | <syntaxhighlight lang="python"> | ||

| + | @app.route('/info/', methods=['POST']) | ||

| + | def add_datainfo(): | ||

| + |     newdata = {'id':request.json['id'],'name':request.json['name'],'year':request.json['year']} | ||

| + |     all['data'].append(newdata) | ||

| + |     return jsonify(all) | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | * DELETE method | ||

| + | <syntaxhighlight lang="python"> | ||

| + | @app.route('/info/', methods=['DELETE']) | ||

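| + | def del_datainfo(): | ||

| + |     delId = request.json['id'] | ||

| + |     for i,q in enumerate(all["data"]): | ||

| + |         # compare against the id field only, not every value in the record | ||

| + |         if q["id"] == delId: | ||

| + |             all['data'].pop(i) | ||

| + |             return jsonify(all) | ||

| + |     # no record matched, so report it instead of failing on an undefined index | ||

| + |     return jsonify({"msg":"No such id!!"}) | ||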

| + | </syntaxhighlight> | ||

| + | |||

| + | * PUT method | ||

| + | <syntaxhighlight lang="python"> | ||

| + | @app.route('/info/', methods=['PUT']) | ||

| + | def put_datainfo(): | ||

| + |     updateData = {'id':request.json['id'],'name':request.json['name'],'year':request.json['year']} | ||

| + |     for i,q in enumerate(all["data"]): | ||

| + |         # compare against the id field only, not every value in the record | ||

| + |         if q["id"] == updateData["id"]: | ||

| + |             all['data'][i] = updateData | ||

| + |             return jsonify(all) | ||

| + |     return jsonify({"msg":"No such id!!"}) | ||

| + | </syntaxhighlight> | ||

| + | |||

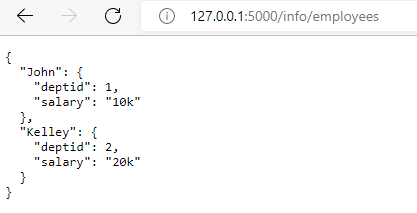

| + | ==== Add one more endpoint employees using query parameters ==== | ||

| + | * Define employees information to be displayed | ||

| + | <syntaxhighlight lang="python"> | ||

| + | #info for each particular user | ||

| + | employees_info = { | ||

| + | "John":{ | ||

| + | "salary":"10k", | ||

| + | "deptid": 1 | ||

| + | }, | ||

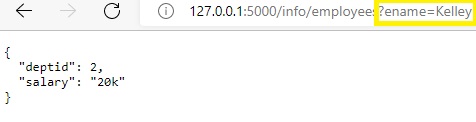

| + | "Kelley":{ | ||

| + | |||

| + | "salary":"20k", | ||

| + | "deptid": 2 | ||

| + | } | ||

| + | } | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | * Create employee class and add onto the root url | ||

| + | We will query the data using query requests with the key being “ename” and value being the name you want to fetch. | ||

| + | |||

| + | <syntaxhighlight lang="python"> | ||

| + | class Employee(Resource): | ||

| + |     def get(self): | ||

| + |         if request.args: | ||

| + |             if "ename" not in request.args.keys(): | ||

| + |                 message = {"msg":"only use ename as query parameter"} | ||

| + |                 return jsonify(message) | ||

| + |             emp_name = request.args.get("ename") | ||

| + |             if emp_name in employees_info.keys(): | ||

| + |                 return jsonify(employees_info[emp_name]) | ||

| + |             return jsonify({"msg":"no employee with this name found"}) | ||

| + |         return jsonify(employees_info) | ||

| + | | ||

| + | api.add_resource(Employee, "/info/employees") | ||

| + | | ||

| + | if __name__ == '__main__': | ||

| + |     app.run(debug=True) | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | |||

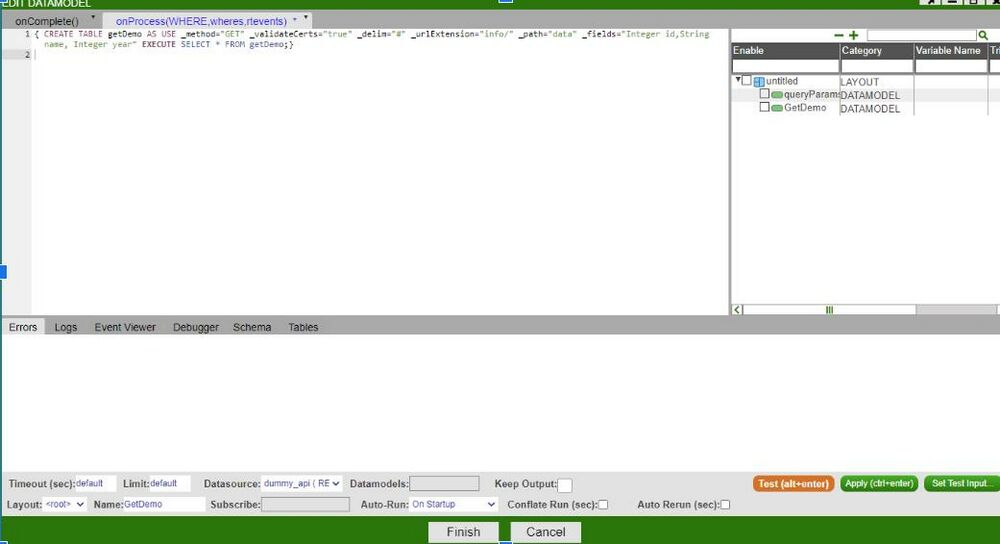

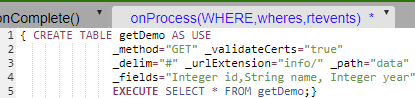

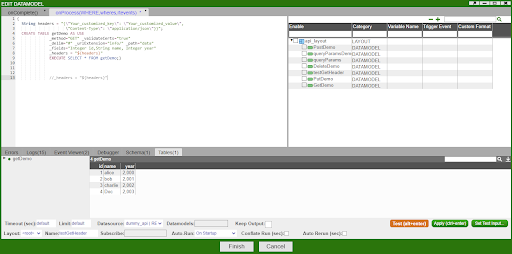

| + | == GET method == | ||

| + | === Example: simple GET method with nested values and paths === | ||

| + | <syntaxhighlight lang="sql"> | ||

| + | CREATE TABLE getDemo AS USE _method="GET" _validateCerts="true" _delim="#" _urlExtension="info/" _path="data" _fields="Integer id,String name, Integer year" EXECUTE SELECT * FROM getDemo; | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | ==== Overview ==== | ||

| + | |||

| + | [[File:GET.jpg|1000px]] | ||

| + | |||

| + | ==== The query that we are using in this example ==== | ||

| + | |||

| + | [[File:Script1.png]] | ||

| + | |||

| + | Use the '''_urlExtension=''' directive to specify the endpoint path that is appended to the root url. In this example, '''_urlExtension="info/" ''' resolves to the url address http://127.0.0.1:5000/info/ | ||

| + | |||

| + | [[File:url2.png]] | ||

| + | |||

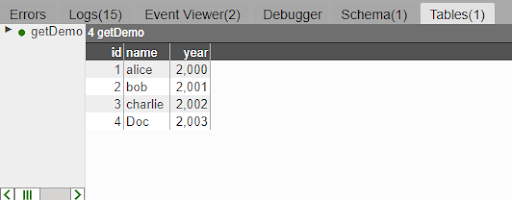

| + | ==== The result table “getDemo” being returned: ==== | ||

| + | |||

| + | [[File:Table2.png]] | ||

| + | |||

| + | === Example2: GET method with query parameters === | ||

| + | Now let’s attach a new endpoint “/employees” onto the previous url. | ||

| + | The new url now is: 127.0.0.1:5000/info/employees | ||

| + | |||

| + | [[File:Employee endpoint.png]] | ||

| + | |||

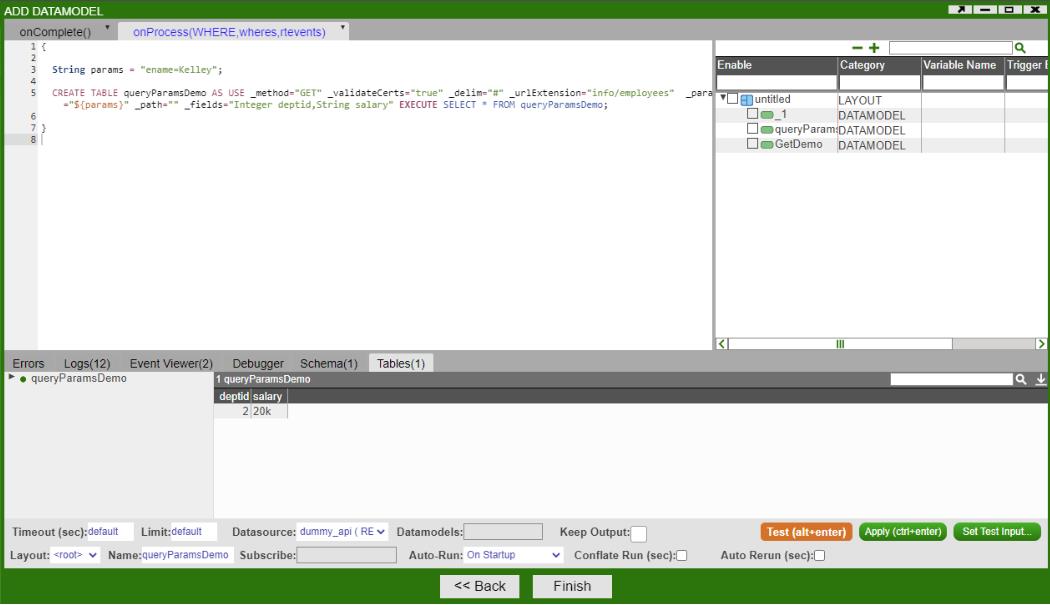

| + | Suppose we want to get the employee information for the employee named “Kelley”. We can use query parameters to achieve this. In AMI, we can configure our data modeler like this: | ||

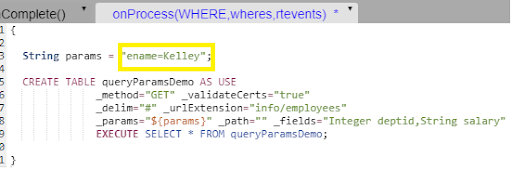

| + | |||

| + | <syntaxhighlight lang="sql"> | ||

| + | { | ||

| + | String params = "ename=Kelley"; | ||

| + | |||

| + | CREATE TABLE queryParamsDemo AS USE | ||

| + | _method="GET" _validateCerts="true" | ||

| + | _delim="#" _urlExtension="info/employees" | ||

| + | _params="${params}" _path="" _fields="Integer deptid,String salary" | ||

| + | EXECUTE SELECT * FROM queryParamsDemo; | ||

| + | } | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | ==== Overview ==== | ||

| + | |||

| + | [[File:GETQ.jpg]] | ||

| + | |||

| + | ==== The query that we are using in this example ==== | ||

| + | [[File:Demo2.png]] | ||

| + | |||

| + | Note that this is the same as building the string you would append to the end of the url in a GET request (''' "?param1=val1&param2=value2" ''') and assigning that string to “_params”. | ||
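How the base url, `_urlExtension` and `_params` combine into the final request url can be sketched in Python as follows. `build_url` is a hypothetical helper written for this illustration; the adapter's own joining logic may differ in details such as slash handling.

```python
from urllib.parse import urlencode

# Hypothetical helper: joins a base url, an endpoint extension and an
# optional query string the way a GET request url is normally formed.
def build_url(base_url, url_extension="", params=""):
    url = base_url.rstrip("/") + "/" + url_extension.lstrip("/")
    return url + "?" + params if params else url

# A query string can be written by hand or produced from a dict.
query = urlencode({"param1": "val1", "param2": "value2"})
print(build_url("http://127.0.0.1:5000", "info/employees", "ename=Kelley"))
print(query)
```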

| + | |||

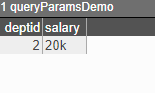

| + | ==== The result table “queryParamsDemo” being returned ==== | ||

| + | |||

| + | [[File:Table3.png|200px]] | ||

| + | |||

| + | The result corresponds to the url: 127.0.0.1:5000/info/employees?ename=Kelley | ||

| + | |||

| + | [[File:QueryRes.jpg]] | ||

| + | |||

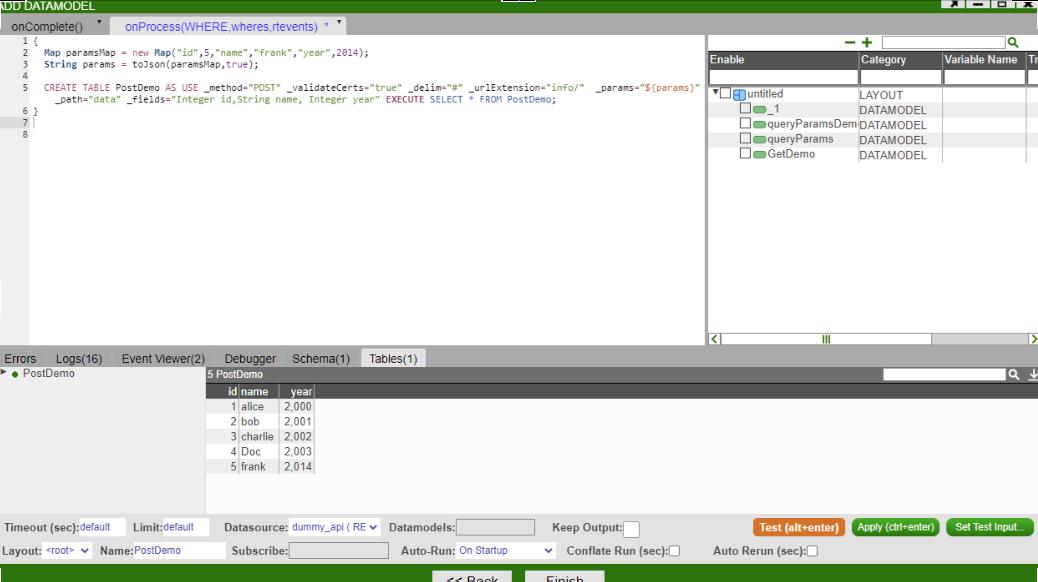

| + | == POST method with nested values == | ||

| + | One can also use the POST method to add new information to the existing data. | ||

| + | * Use '''_path="" ''' to specify which part of the response your table is based on. In the above example the data is nested, so to base your table on ''' "data" ''', set ''' _path="data" ''' . | ||

| + | |||

| + | [[File:POSTDiagram.png]] | ||

| + | |||

| + | * Use ''' _fields="" ''' to specify the schema of your table. For this example, set ''' _fields="Integer id,String name, Integer year" ''' to grab all the attributes from the data path. | ||

| + | * Use ''' _params="${params}" ''' to specify, in JSON format, the new row you want to insert into the existing table. | ||

| + | The example shows how you can insert a new row record ''' Map("id",5,"name","frank","year",2014) ''' into the existing table. <br> | ||

| + | Here is how you can configure the example in the AMI: | ||

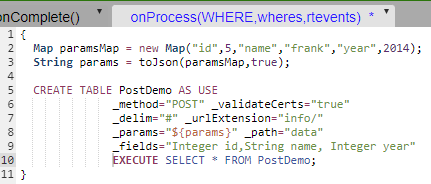

| + | <syntaxhighlight lang="sql"> | ||

| + | { | ||

| + | Map paramsMap = new Map("id",5,"name","frank","year",2014); | ||

| + | String params = toJson(paramsMap,true); | ||

| + | |||

| + | CREATE TABLE PostDemo AS USE _method="POST" _validateCerts="true" _delim="#" _urlExtension="info/" _params="${params}" _path="data" _fields="Integer id,String name, Integer year" EXECUTE SELECT * FROM PostDemo; | ||

| + | } | ||

| + | |||

| + | </syntaxhighlight> | ||
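The roles of `_path` and `_fields` can be pictured with a short Python sketch that pulls a list of records out of a JSON response and casts each attribute to its declared type. This is an assumption-laden illustration: `extract_rows` and `CASTS` are hypothetical names, only a single-level path is handled, and the sample response is a trimmed version of the demo data.

```python
import json

# Hypothetical mapping from the type names used in _fields to Python casts.
CASTS = {"Integer": int, "String": str, "Double": float}

# Hypothetical helper: selects the node named by `path` (or the whole
# response when path is empty) and builds typed rows from `fields`.
def extract_rows(response_text, path, fields):
    node = json.loads(response_text)
    if path:
        node = node[path]
    records = node if isinstance(node, list) else [node]
    columns = [field.split() for field in fields.split(",")]
    return [{name: CASTS[type_name](record[name]) for type_name, name in columns}
            for record in records]

response = json.dumps({"page": 1, "data": [{"id": 5, "name": "frank", "year": 2014}]})
print(extract_rows(response, "data", "Integer id,String name, Integer year"))
```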

| + | |||

| + | === Overview === | ||

| + | |||

| + | [[File:POST.jpg]] | ||

| + | |||

| + | |||

| + | === The query we are using in this example: === | ||

| + | [[File:AMIScript POST.png]] | ||

| + | |||

| + | |||

| + | Note: For the POST method, params (from “_params”) are passed as JSON in the request body. | ||
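For comparison, the same kind of request can be assembled with Python's standard library. The sketch below only builds the request object and does not send it; `build_post` is a hypothetical helper, and the url assumes the Flask demo from this page is running locally.

```python
import json
import urllib.request

# Hypothetical helper: serialises the parameters as JSON and places them
# in the body of a POST request.
def build_post(url, params):
    body = json.dumps(params).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )

req = build_post("http://127.0.0.1:5000/info/", {"id": 5, "name": "frank", "year": 2014})
print(req.get_method(), req.data.decode("utf-8"))
```

Passing `req` to `urllib.request.urlopen` would send it, provided the demo server is reachable.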

| + | |||

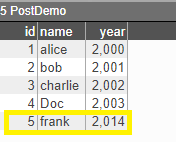

| + | === The table “PostDemo” being returned === | ||

| + | |||

| + | [[File:POSTRes.png]] | ||

| + | |||

| + | The new record is shown on the browser after POST method.<br> | ||

| + | |||

| + | [[File:POSTBrowserRes.png]] | ||

| + | |||

| + | |||

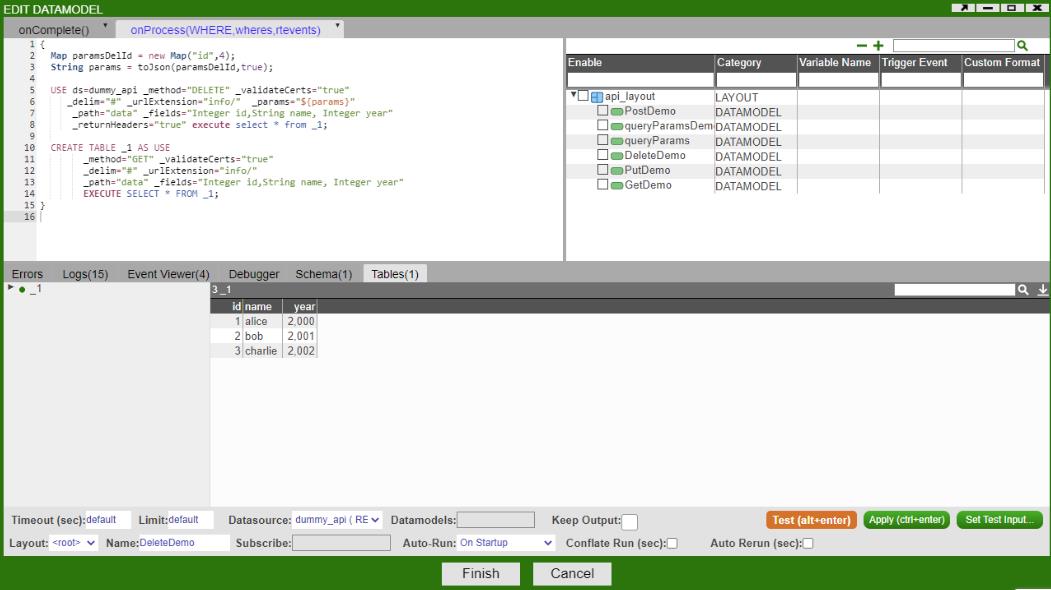

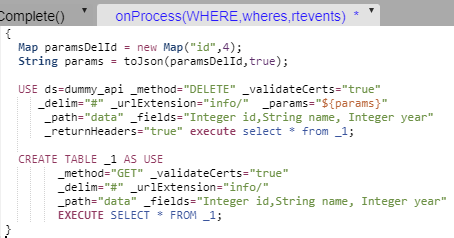

| + | == DELETE method == | ||

| + | |||

| + | Use the '''DELETE''' method to remove a specific data record, identified for example by its id. To remove the data record with the id of 4 in the ''' “data” ''' path, configure your data modeler as follows. | ||

| + | |||

| + | <syntaxhighlight lang="sql"> | ||

| + | { | ||

| + | Map paramsDelId = new Map("id",4); | ||

| + | String params = toJson(paramsDelId,true); | ||

| + | |||

| + | USE ds=dummy_api _method="DELETE" _validateCerts="true" | ||

| + | _delim="#" _urlExtension="info/" _params="${params}" | ||

| + | _path="data" _fields="Integer id,String name, Integer year" | ||

| + | _returnHeaders="true" execute select * from _1; | ||

| + | |||

| + | CREATE TABLE _1 AS USE | ||

| + | _method="GET" _validateCerts="true" | ||

| + | _delim="#" _urlExtension="info/" | ||

| + | _path="data" _fields="Integer id,String name, Integer year" | ||

| + | EXECUTE SELECT * FROM _1; | ||

| + | } | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | === Overview === | ||

| + | [[File:DELETEOverview.jpg]] | ||

| + | |||

| + | === The query we are using in this example === | ||

| + | [[File:DetailedScriptDELETE.png]] | ||

| + | |||

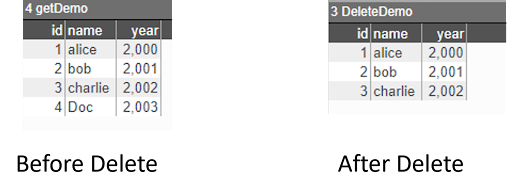

| + | === The returned tables “DeleteDemo” before and after “DELETE” method === | ||

| + | |||

| + | [[File:TableCompareBeforeAfterDELETE.png]] | ||

| + | |||

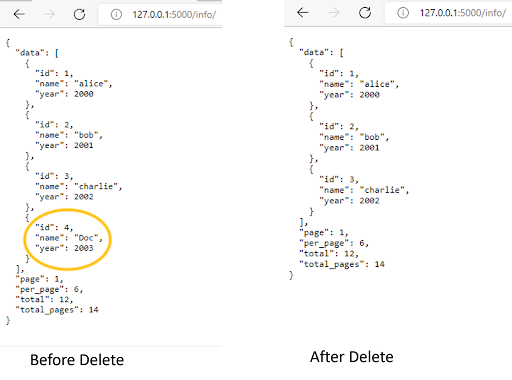

| + | === The results on the web browser before and after DELETE method === | ||

| + | |||

| + | [[File:BrowserTableCompareBeforeAfterDelete.png]] | ||

| + | |||

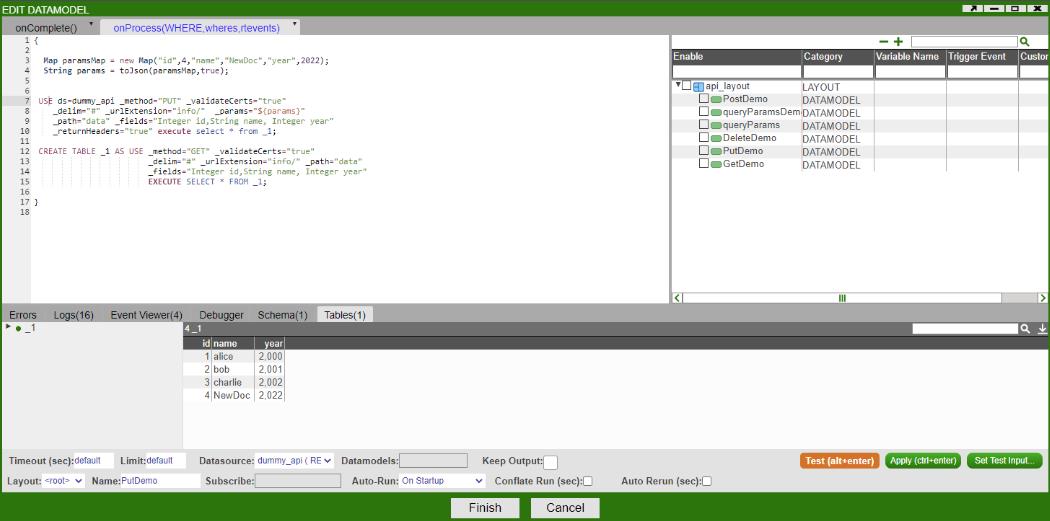

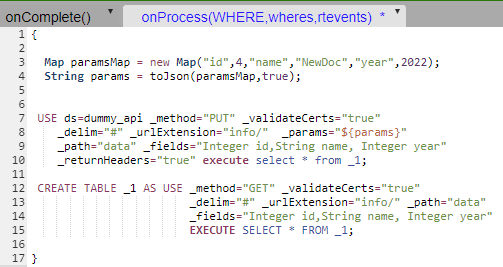

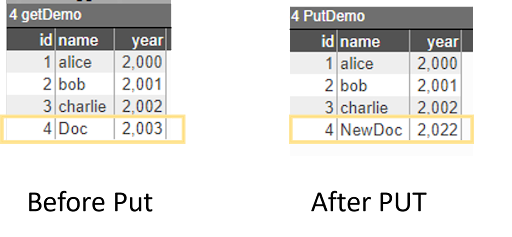

| + | == PUT method == | ||

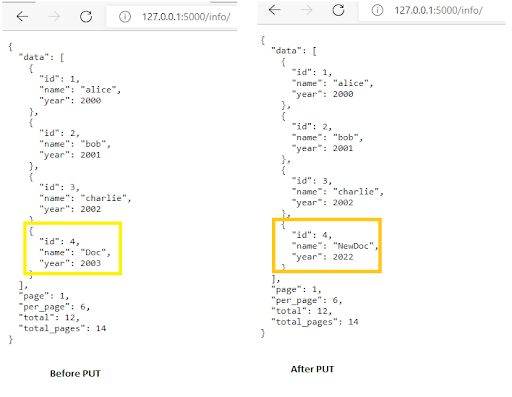

| + | One can use '''PUT''' method to update the existing data record (usually by data id). The example below demonstrates how you can update the data record where '''id''' is equal to '''4''' to '''Map("id",4,"name","NewDoc","year",2022)''';<br> | ||

| + | Here is how you can configure PUT method in AMI:<br> | ||

| + | |||

| + | <syntaxhighlight lang="sql"> | ||

| + | { | ||

| + | Map paramsMap = new Map("id",4,"name","NewDoc","year",2022); | ||

| + | String params = toJson(paramsMap,true); | ||

| + | |||

| + | |||

| + | USE ds=dummy_api _method="PUT" _validateCerts="true" | ||

| + | _delim="#" _urlExtension="info/" _params="${params}" | ||

| + | _path="data" _fields="Integer id,String name, Integer year" | ||

| + | _returnHeaders="true" execute select * from _1; | ||

| + | |||

| + | CREATE TABLE _1 AS USE _method="GET" _validateCerts="true" | ||

| + | _delim="#" _urlExtension="info/" _path="data" | ||

| + | _fields="Integer id,String name, Integer year" | ||

| + | EXECUTE SELECT * FROM _1; | ||

| + | } | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | === Overview === | ||

| + | [[File:PUTOverview.jpg]] | ||

| + | |||

| + | === The query used in this example === | ||

| + | |||

| + | [[File:DetailedScriptPUT.png]] | ||

| + | |||

| + | === The table “PutDemo” being returned before and after PUT method === | ||

| + | |||

| + | [[File:TableCompareBeforeAfterPUT.png]] | ||

| + | |||

| + | === The result on your API browser gets updated === | ||

| + | |||

| + | [[File:TableCompareBeforeAfterPUTOnWeb.png]] | ||

| + | |||

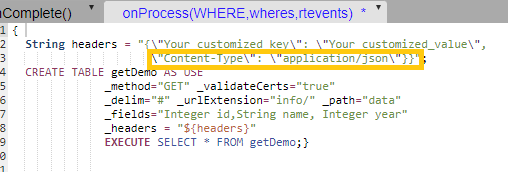

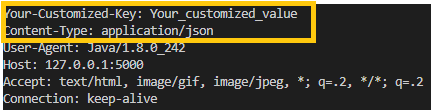

| + | == _headers() == | ||

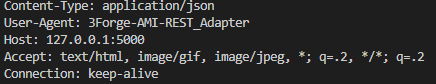

| + | One can use _headers() to customize the information in the header as required. By default, if the _headers() is not specified, it will return the header like this:<br> | ||

| + | [[File:HeaderExample.png]] | ||

| + | |||

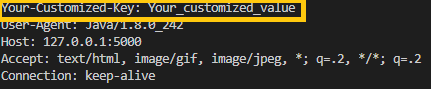

| + | If you were to customize your own header, you can configure your ami script like this:<br> | ||

| + | |||

| + | <syntaxhighlight lang="sql"> | ||

| + | { | ||

| + | String headers = "{\"Your_customized_key\": \"Your_customized_value\", | ||

| + | \"Content-Type\": \"application/json\"}"; | ||

| + | CREATE TABLE getDemo AS USE | ||

| + | _method="GET" _validateCerts="true" | ||

| + | _delim="#" _urlExtension="info/" _path="data" | ||

| + | _fields="Integer id,String name, Integer year" | ||

| + | _headers = "${headers}" | ||

| + | EXECUTE SELECT * FROM getDemo;} | ||

| + | |||

| + | </syntaxhighlight> | ||

| + | |||

| + | === Overview === | ||

| + | [[File:HeaderOverview.png]] | ||

| + | |||

| + | === The query used in this example === | ||

| + | |||

| + | [[File:DetailedScriptHeaders.png]] | ||

| + | |||

| + | '''Note''': include the second key-value pair ''' \"Content-Type\": \"application/json\" ''' in your header. Custom headers replace the defaults, so a header containing only ''' \"Your_customized_key\": \"Your_customized_value\" ''' will overwrite the default ''' \"Content-Type\": \"application/json\" ''' in the header. | ||
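The replace-not-merge behaviour described in the note can be modelled in a few lines of Python. This is a sketch of the described behaviour only: `effective_headers` and `DEFAULT_HEADERS` are hypothetical names, and the default set is assumed to contain just the Content-Type entry.

```python
import json

# Assumed default header set, taken from the note above.
DEFAULT_HEADERS = {"Content-Type": "application/json"}

# Hypothetical model: a custom header string replaces the defaults entirely
# instead of being merged into them.
def effective_headers(custom_json=None):
    if custom_json is None:
        return dict(DEFAULT_HEADERS)
    return json.loads(custom_json)

only_custom = effective_headers('{"Your_customized_key": "Your_customized_value"}')
with_type = effective_headers(
    '{"Your_customized_key": "Your_customized_value", "Content-Type": "application/json"}'
)
print("Content-Type" in only_custom, "Content-Type" in with_type)
```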

| + | |||

| + | === Console output for header === | ||

| + | |||

| + | [[File:UndesiredOutput.png|Undesired output with missing Content-Type]] | ||

| + | <br> | ||

| + | Undesired output with missing Content-Type<br> | ||

| + | |||

| + | |||

| + | [[File:DesiredOutput.png|Desired output]] | ||

| + | <br> | ||

| + | Desired output | ||

| + | |||

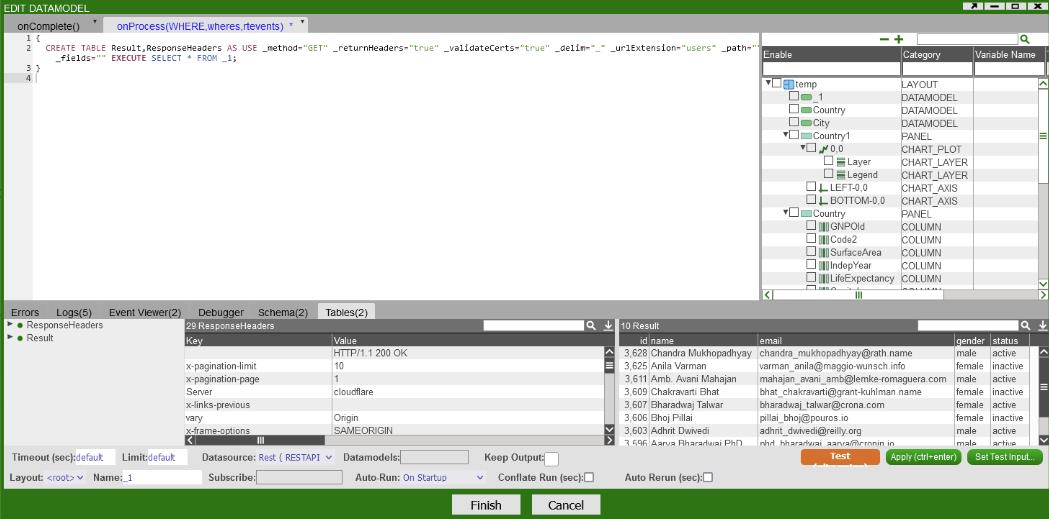

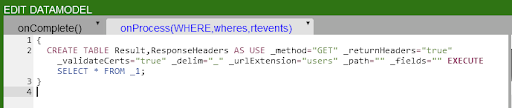

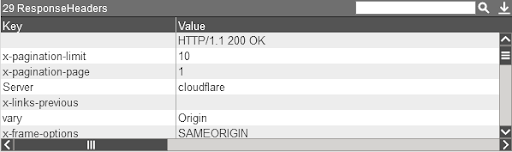

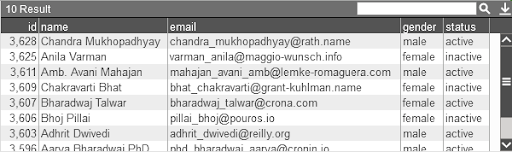

| + | == ReturnHeaders Directive == | ||

| + | When '''_returnHeaders''' is set to true, the query will return two tables, one the results of your query and the other the responseHeaders. When it is enabled, the rest adapter will handle any IOExceptions. If your result table has one column which is named Error, there was an error with the Rest Service that you are querying. | ||

| + | |||

| + | === Example 1: Basic usage of _returnHeaders === | ||

| + | |||

| + | <syntaxhighlight lang="sql"> | ||

| + | |||

| + | { | ||

| + | CREATE TABLE Result,ResponseHeaders AS USE _method="GET" _returnHeaders="true" _validateCerts="true" _delim="_" _urlExtension="users" _path="" _fields="" EXECUTE SELECT * FROM _1; | ||

| + | } | ||

| + | |||

| + | </syntaxhighlight> | ||

| + | |||

| + | ==== Example 1 Overview ==== | ||

| + | |||

| + | [[File:E1Overview.jpg]] | ||

| + | |||

| + | ==== The query that we are using in this example ==== | ||

| + | |||

| + | [[File:E1DetailAMI.png]] | ||

| + | |||

| + | ==== The response headers being returned ==== | ||

| + | |||

| + | [[File:ResponseHeader.png]] | ||

| + | |||

| + | ==== The result being returned ==== | ||

| + | |||

| + | [[File:E1res.png]] | ||

| + | |||

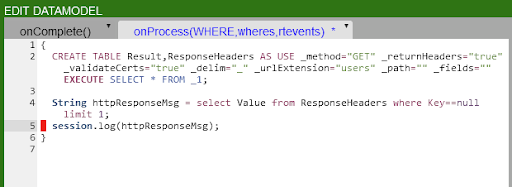

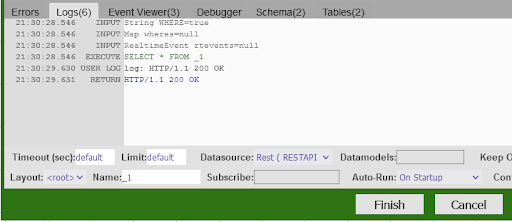

| + | === Example 2: How to get the HTTP response code from the responseHeaders === | ||

| + | This section shows how to extract the HTTP response message from the response headers and how to get the response code from that message. We will use Example 1 as the base for this example <br> | ||

| + | | ||

| + | Add the following to Example 1:<br> | ||

| + | |||

| + | <syntaxhighlight lang="sql"> | ||

| + | String httpResponseMsg = select Value from ResponseHeaders where Key==null limit 1; | ||

| + | session.log(httpResponseMsg); | ||

| + | </syntaxhighlight> | ||

| + | |||

| + | ==== The datamodel in this example ==== | ||

| + | |||

| + | [[File:E2DM.png]] | ||

| + | |||

| + | Note the limit 1: if the query returned more than one row while selecting only one column, it would return a List.<br> | ||

| + | In addition, a query that selects one row with multiple columns returns a Map; all other queries return a Table.<br> | ||
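The return-shape rule described here can be summarised in a short Python sketch. It reflects one reading of the text, in which a single row with a single column yields a plain value (as the String assignment in the example suggests); `query_result` is a hypothetical function and the sample header values are invented.

```python
# Hypothetical model of the result shapes described above.
def query_result(columns, rows):
    if len(columns) == 1 and len(rows) == 1:
        return rows[0][0]                      # single value
    if len(columns) == 1:
        return [row[0] for row in rows]        # one column, many rows: List
    if len(rows) == 1:
        return dict(zip(columns, rows[0]))     # one row, many columns: Map
    return [dict(zip(columns, row)) for row in rows]  # otherwise: Table

print(query_result(["Value"], [["HTTP/1.1 200 OK"]]))
print(query_result(["Value"], [["HTTP/1.1 200 OK"], ["application/json"]]))
print(query_result(["Key", "Value"], [["Content-Type", "application/json"]]))
```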

| + | |||

| + | ==== The result of that query: ==== | ||

| + | |||

| + | [[File:QueryResForReturnHeader.png]] | ||

| + | |||

| + | |||

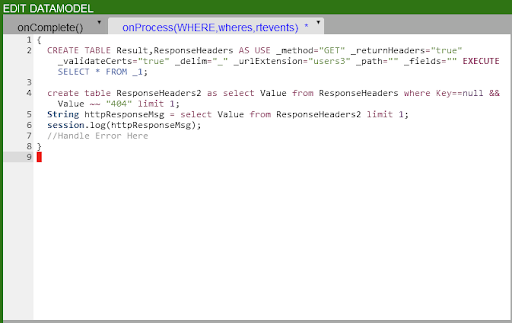

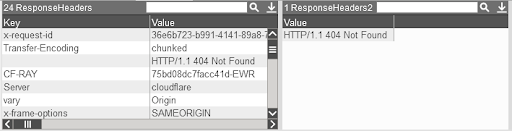

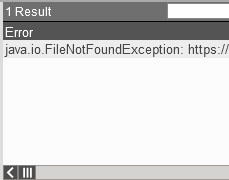

| + | === Example 3: Handling Errors, 404 Page Not Found === | ||

| + | When _returnHeaders is set to true, REST API errors are caught and left to the developer to handle. Below is an example of a 404 error. When there is an error, it is returned in the result table as a single column named '''`Error`'''.<br> | ||

| + | |||

| + | '''The datamodel in this example:''' | ||

| + | |||

| + | <syntaxhighlight lang="sql"> | ||

| + | { | ||

| + | CREATE TABLE Result,ResponseHeaders AS USE _method="GET" _returnHeaders="true" _validateCerts="true" _delim="_" _urlExtension="users3" _path="" _fields="" EXECUTE SELECT * FROM _1; | ||

| + | |||

| + | create table ResponseHeaders2 as select Value from ResponseHeaders where Key==null && Value ~~ "404" limit 1; | ||

| + | String httpResponseMsg = select Value from ResponseHeaders2 limit 1; | ||

| + | session.log(httpResponseMsg); | ||

| + | // Handle Error Here | ||

| + | } | ||

| + | </syntaxhighlight> | ||
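Once the response message has been pulled out of the headers, the numeric status code still has to be extracted from it. The Python sketch below shows one way to do that, assuming the message looks like a standard HTTP status line such as "HTTP/1.1 404 NOT FOUND"; the exact text returned by a given service is an assumption, and `status_code` is a hypothetical helper.

```python
import re

# Hypothetical helper: returns the first standalone three-digit number in
# the message, or None when there is none.
def status_code(http_response_msg):
    match = re.search(r"\b(\d{3})\b", http_response_msg)
    return int(match.group(1)) if match else None

# Assumed message format for illustration.
code = status_code("HTTP/1.1 404 NOT FOUND")
if code is not None and code >= 400:
    print("request failed with status", code)
```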

| + | |||

| + | The datamodel in this example uses the `~~` syntax, which is 3forge's Simplified Text Matching. For more information, see: | ||

| + | https://docs.3forge.com/mediawiki/AMI_Script#SimplifiedText_Matching | ||

| + | |||

| + | [[File:E3O.png]] | ||

| + | |||

| + | ==== The Response Headers and the Http Response Message ==== | ||

| + | |||

| + | [[File:HttpResponseMSG.png]] | ||

| + | |||

| + | |||

| + | ==== The Result Table now returns the error message ==== | ||

| + | |||

| + | [[File:ErrorMsg.png]] | ||

| + | |||

| + | =AMI Deephaven Adapter= | ||

| + | |||

| + | '''Requirements''' | ||

| + | |||

| + | 1. Docker | ||

| + | |||

| + | 2. JDK 17 | ||

| + | |||

| + | ==Getting Started with Deephaven== | ||

| + | |||

| + | 1. Install sample python-example containers from Deephaven from [https://deephaven.io/core/docs/tutorials/quickstart/ Quick start Install Deephaven] | ||

| + | |||

| + | a. ''curl'' https://raw.githubusercontent.com/deephaven/deephaven-core/main/containers/python-examples/base/docker-compose.yml -O | ||

| + | |||

| + | b. ''docker-compose'' pull | ||

| + | |||

| + | c. ''docker-compose'' up | ||

| + | |||

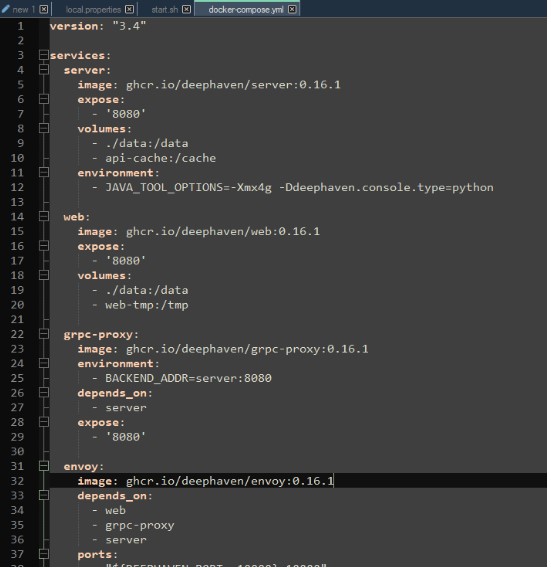

| + | 2. If you run into errors with the grpc container, downgrade the containers in the "docker-compose.yml" file to 0.16.1 | ||

| + | |||

| + | [[File:Deephaven Feedhandler.jpg]] | ||

| + | |||

| + | 3. Go to http://localhost:10000/ide/ on the browser | ||

| + | |||

| + | 4. Execute the following commands in the deephaven ide | ||

| + | |||

| + | <pre>from deephaven import read_csv | ||

| + | |||

| + | seattle_weather = read_csv("https://media.githubusercontent.com/media/deephaven/examples/main/GSOD/csv/seattle.csv") | ||

| + | |||

| + | from deephaven import agg | ||

| + | |||

| + | hi_lo_by_year = seattle_weather.view(formulas=["Year = yearNy(ObservationDate)", "TemperatureF"])\ | ||

| + | .where(filters=["Year >= 2000"])\ | ||

| + | .agg_by([\ | ||

| + | agg.avg(cols=["Avg_Temp = TemperatureF"]),\ | ||

| + | agg.min_(cols=["Lo_Temp = TemperatureF"]),\ | ||

| + | agg.max_(cols=["Hi_Temp = TemperatureF"]) | ||

| + | ],\ | ||

| + | by=["Year"]) | ||

| + | </pre> | ||

| + | |||

| + | |||

| + | ==Installing the datasource plugin to AMI== | ||

| + | |||

| + | 1. Place "DeephavenFH.jar" and all other jar files in the "dependencies" directory under "/amione/lib/" | ||

| + | |||

| + | 2. Copy the properties from the ''local.properties'' file to your own ''local.properties'' | ||

| + | |||

| + | 3. For JDK17 compatibility, use the attached start.sh file or add the following parameters to the java launch command | ||

| + | |||

| + | <pre> --add-opens java.base/java.lang=ALL-UNNAMED --add-opens java.base/java.util=ALL-UNNAMED --add-opens java.base/java.text=ALL-UNNAMED --add-opens java.base/sun.net=ALL-UNNAMED --add-opens java.management/sun.management=ALL-UNNAMED --add-opens java.base/sun.security.action=ALL-UNNAMED </pre> | ||

| + | |||

| + | 4. Also add the following to the java launch parameters in the start.sh file | ||

| + | |||

| + | <pre> -DConfiguration.rootFile=dh-defaults.prop </pre> | ||

| + | |||

| + | 5. Launch AMI (using the start.sh script) | ||

| + | |||

| + | ==Creating queries in the datamodel== | ||

| + | |||

| + | Queries in the datamodel (after execute keyword) will be sent to the deephaven application as a console command. Any table stated/created in the deephaven query will be returned as an AMI table. | ||

| + | |||

| + | ''<span style="color: blue;">create table testDHTable as execute sample = hi_lo_by_year.where(filters=["Year = 2000"]);</span>'' | ||

| + | |||

| + | This creates a table by the name of sample in deephaven and populates the created ''testDHTable'' using the sample table. | ||

| + | |||

| + | ''<span style="color: blue;">create table testDHTable as execute hi_lo_by_year;</span>'' | ||

| + | |||

| + | This will create a table ''testDHTable'' and populate it with the ''hi_lo_by_year'' table from deephaven. | ||

| + | |||

| + | =AMI Snowflake Adapter= | ||

| + | |||

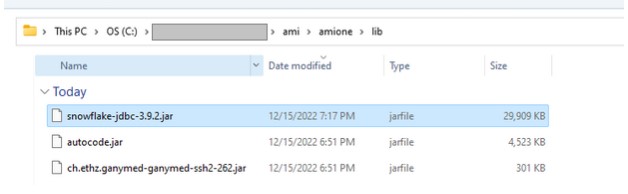

| + | Prerequisites: Get the Snowflake JDBC jar. | ||

| + | |||

| + | 1.0 Add Snowflake JDBC jar file to your 3forge AMI lib directory. | ||

| + | |||

| + | First go to the following directory: ami/amione/lib and check whether the snowflake-jdbc-<version>.jar file is already there. If it already exists and you want to update the snowflake-jdbc jar, remove the old jar and move the new jar into that directory. | ||

| + | |||

| + | [[File:Snowflake.01.jpg]] | ||

| + | |||

| + | 1.1 Restart AMI | ||

| + | |||

| + | Run the stop.sh or stop.bat file in the scripts directory and then run start.sh or start.bat. If you are running on Windows you may use AMI_One.exe | ||

| + | |||

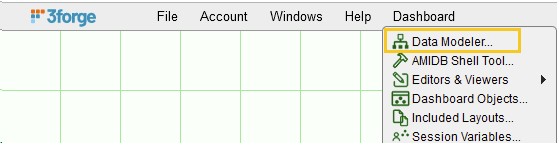

| + | 1.2 Go to the Dashboard Datamodeler | ||

| + | |||

| + | [[File:Snowflake.02.jpg]] | ||

| + | |||

| + | 1.3 Click Attach Datasource & then choose the Generic JDBC Adapter | ||

| + | |||

| + | [[File:Snowflake.03.jpg]] | ||

| + | |||

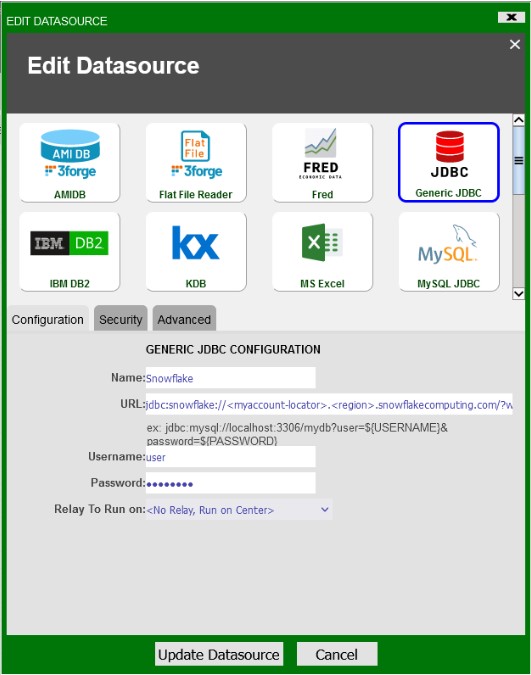

| + | 1.4 Setup the Snowflake Adapter | ||

| + | |||

| + | [[File:Snowflake.04.jpg]] | ||

| + | |||

| + | Fill in the Name, Url, User and, if required, Password fields. | ||

| + | |||

| + | The url format is as follows: | ||

| + | |||

| + | <pre> | ||

| + | jdbc:snowflake://<myaccount-locator>.<region>.snowflakecomputing.com/?user=${USERNAME}&password=${PASSWORD}&warehouse=<active-warehouse>&db=<database>&schema=<schema>&role=<role> | ||

| + | |||

| + | The basic Snowflake JDBC connection parameters: | ||

| + | user - the login name of the user | ||

| + | password - the password | ||

| + | warehouse - the virtual warehouse for which the specified role has privileges | ||

| + | db - the database for which the specified role has privileges | ||

| + | schema - the schema for which the specified role has privileges | ||

| + | role - the role to which the specified user has been assigned | ||

| + | </pre> | ||

| + | |||

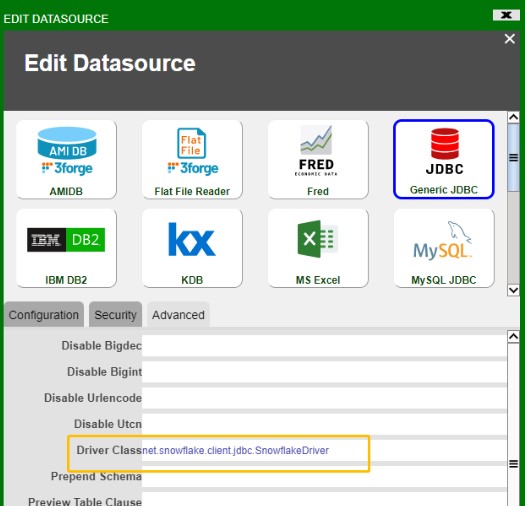

| + | 1.5 Setup Driver Class in the "Advanced" Tab | ||

| + | |||

| + | Next, go to the “Advanced” tab and, in the Driver Class field, add: | ||

| + | |||

| + | <pre> net.snowflake.client.jdbc.SnowflakeDriver </pre> | ||

| + | |||

| + | [[File:Snowflake.05.jpg]] | ||

| + | |||

| + | Once the fields are filled, click Add Datasource. You are now ready to send queries to Snowflake via AMI. | ||

| + | |||

| + | 2. First Query - Show Tables | ||

| + | |||

| + | Let’s write our first query to get a list of tables that we have permission to view. | ||

| + | |||

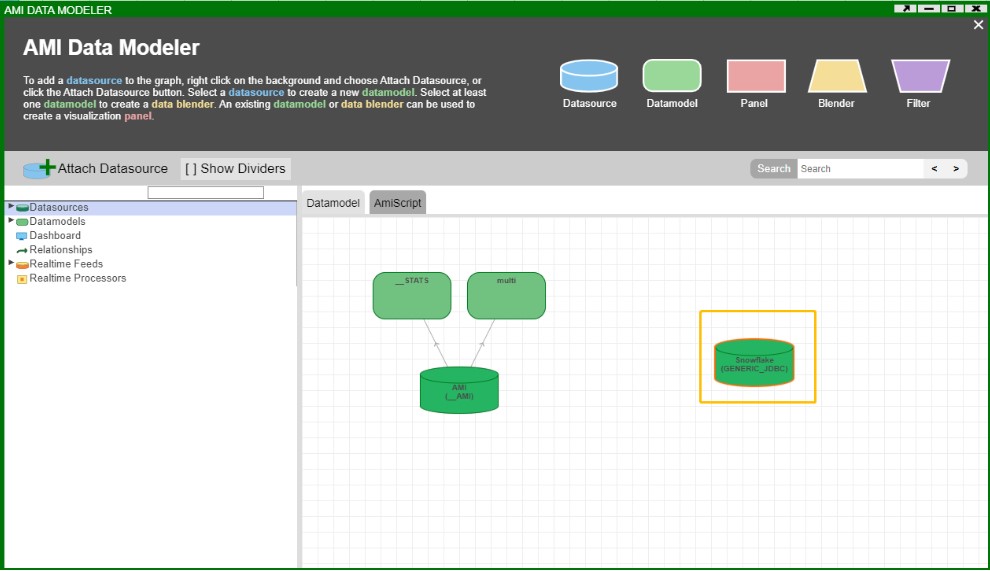

| + | 2.1 Double click the datasource “Snowflake” that we just set up | ||

| + | |||

| + | [[File:Snowflake.06.jpg]] | ||

| + | |||

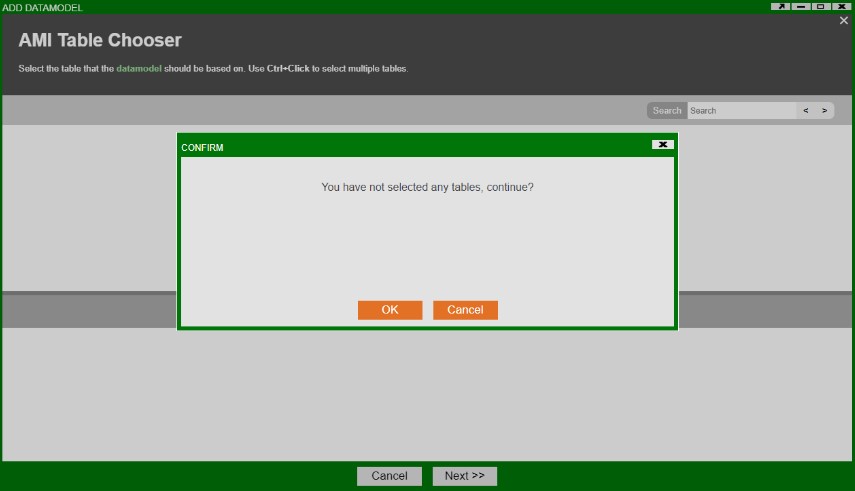

| + | 2.2 Click Next & then OK | ||

| + | |||

| + | [[File:Snowflake.07.jpg]] | ||

| + | |||

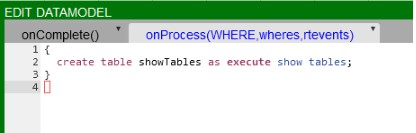

| + | 2.3 Show Tables Query | ||

| + | |||

| + | In the Datamodel, let's type a quick query to show the tables available to us. | ||

| + | |||

| + | [[File:Snowflake.08.jpg]] | ||

| + | |||

| + | Here's the script for the datamodel: | ||

| + | |||

| + | <pre> | ||

| + | { | ||

| + | create table showTables as execute show tables; | ||

| + | } | ||

| + | </pre> | ||

| + | |||

| + | Hit the orange test button and you will see something like this: | ||

| + | |||

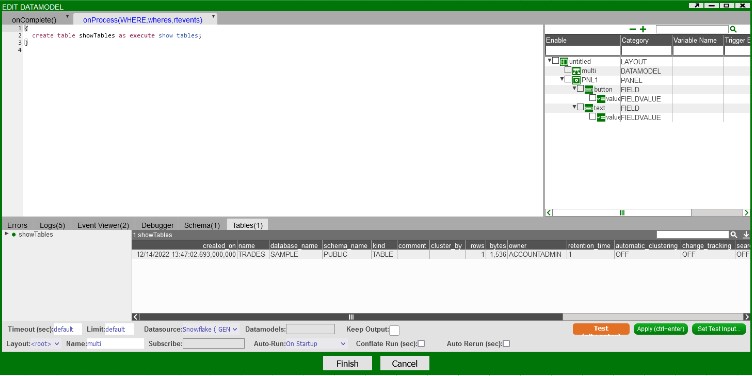

| + | [[File:Snowflake.09.jpg]] | ||

| + | |||

| + | This created an AMI table called “showTables”, which returns the results of the query "show tables" from the Snowflake Database. | ||

| + | |||

| + | Here in the query, the “USE” keyword is indicating that we are directing the query to an external datasource, which in this case is Snowflake. | ||

| + | |||

| + | The “EXECUTE” keyword indicates that whatever query follows EXECUTE should use the query syntax from Snowflake. | ||

| + | |||

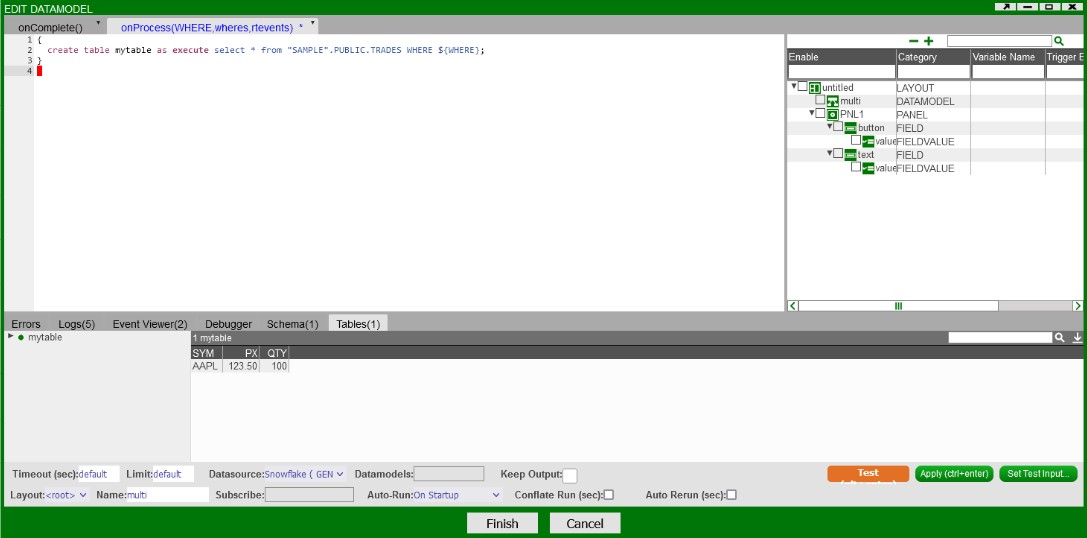

| + | 3.0 Querying Tables. | ||

| + | |||

| + | Just like you would in SnowSQL, you can query a table using its fully-qualified schema object name or the table name that is available in your current database schema. | ||

| + | |||

| + | Ex. | ||

| + | <pre> | ||

| + | { | ||

| + | create table mytable as execute select * from "DATABASE".SCHEMA.TABLENAME WHERE ${WHERE}; | ||

| + | } | ||

| + | </pre> | ||

| + | |||

| + | or | ||

| + | <pre> | ||

| + | { | ||

| + | create table mytable as execute select * from TABLENAME WHERE ${WHERE}; | ||

| + | } | ||

| + | </pre> | ||

| + | |||

| + | Here's an example | ||

| + | |||

| + | [[File:Snowflake.10.jpg]] | ||

| + | |||

| + | 4.0 Snowflake Error Messages | ||

| + | |||

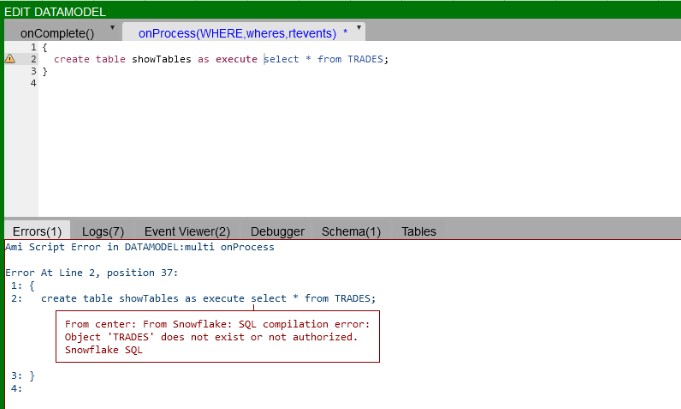

| + | 4.1 SQL Compilation error: Object does not exist or not authorized | ||

| + | |||

| + | If you get the following error: | ||

| + | |||

| + | <pre> | ||

| + | From center: From Snowflake: SQL compilation error: | ||

| + | Object 'objectname' does not exist or not authorized. | ||

| + | Snowflake SQL | ||

| + | </pre> | ||

| + | It could be one of two things. The table or object you are trying to access may not exist in the database or schema that you have selected. | ||

| + | |||

| + | The other possibility is that your user or role does not have the permissions required to access that table or object. | ||

| + | |||

| + | [[File:Snowflake.11.jpg]] | ||

| + | |||

| + | =Using KDB Functions in AMI Data Model= | ||

| + | <syntaxhighlight lang="amiscript"> | ||

| + | use ds=myKDB execute myFunc[arg1\;arg2...]; | ||

| + | </syntaxhighlight> | ||

| + | '''Be sure to escape semicolon (;) with backslash (\)''' | ||

| + | |||

| + | = Supported Redis Commands in 3Forge AMI = | ||

| + | This document lists the accepted return types on AMI for every supported Redis command.<br> | ||

| + | |||

| + | 1. The format for each command is as follows:<br> | ||

| + | Command_name parameters … <br> | ||

| + | - accepted_return_types <br> | ||

| + | - Additional comments if necessary. <br> | ||

| + | 2. Some return types depend on the inclusion of optional parameters.<br> | ||

| + | 3. “numeric” in this document means that if a Redis command’s output is a number, you may use int/float/double/byte/short/long in AMI web, each bounded by its respective range as defined by Java. <br> | ||

| + | 4. Almost every command’s accepted return has String, but String is not iterable in AMI.<br> | ||

| + | 5. String (“OK”) or numeric (1/0) means whatever is in the parenthesis is the default response if the query executes without error.<br> | ||

| + | 6. Square brackets [] indicate optional parameters. A pipe | means choose 1 among many.<br> | ||

| + | 7. AMI redis adapter’s syntax is case '''insensitive.'''<br> | ||

| + | |||

| + | |||

| + | |||

| + | '''APPEND''' key value <br> | ||

| + | -numeric/string<br> | ||

| + | '''AUTH''' [username] password<br> | ||

| + | -string<br> | ||

| + | '''BITCOUNT''' key [ start end [ BYTE | BIT]] <br> | ||

| + | -numeric/string<br> | ||

| + | '''BITOP''' operation destkey key [key ...] <br> | ||

| + | -string/numeric<br> | ||

| + | -Operations are AND OR XOR NOT<br> | ||

| + | '''BITPOS''' key bit [ start [ end [ BYTE | BIT]]]<br> | ||

| + | numeric/string<br> | ||

| + | '''BLMOVE''' source destination LEFT | RIGHT LEFT | RIGHT timeout <br> | ||

| + | string<br> | ||

| + | '''BLPOP''' key [key ...] timeout <br> | ||

| + | string/list<br> | ||

| + | '''BRPOP''' key [key ...] timeout <br> | ||

| + | string/list<br> | ||

| + | |||

| + | '''BZPOPMAX''' key [key ...] timeout <br> | ||

| + | list/string<br> | ||

| + | |||

| + | |||

| + | '''BZPOPMIN''' key [key ...] timeout <br> | ||

| + | list/string<br> | ||

| + | '''COPY''' source destination [DB destination-db] [REPLACE]<br> | ||

| + | string (true/false)<br> | ||

| + | '''DBSIZE''' <br> | ||

| + | numeric/string<br> | ||

| + | '''DECR''' key <br> | ||

| + | numeric/string<br> | ||

| + | '''DECRBY''' key decrement<br> | ||

| + | numeric/string<br> | ||

| + | '''DEL''' key [key ...] <br> | ||

| + | string/numeric (1/0)<br> | ||

| + | '''DUMP''' key <br> | ||

| + | string/binary (logging will not display content, it will say “x bytes”)<br> | ||

| + | '''ECHO''' message <br> | ||

| + | string<br> | ||

| + | '''EVAL''' script numkeys [key [key ...]] [arg [arg ...]] <br> | ||

| + | depends on script<br> | ||

| + | '''EXISTS''' key [key ...] <br> | ||

| + | numeric/string<br> | ||

| + | '''EXPIRE''' key seconds [ NX | XX | GT | LT] <br> | ||

| + | numeric/string<br> | ||

| + | '''EXPIREAT''' key unix-time-seconds [ NX | XX | GT | LT] <br> | ||

| + | numeric/string<br> | ||

| + | '''EXPIRETIME''' key <br> | ||

| + | numeric/string<br> | ||

| + | '''FLUSHALL''' <br> | ||

| + | string (“OK”)<br> | ||

| + | '''FLUSHDB''' [ ASYNC | SYNC] <br> | ||

| + | string (“OK”)<br> | ||

| + | |||

| + | '''GEOADD''' key [ NX | XX] [CH] longitude latitude member [ longitude latitude member ...] <br> | ||

| + | numeric/string<br> | ||

| + | '''GEODIST''' key member1 member2 [ M | KM | FT | MI] <br> | ||

| + | numeric/string<br> | ||

| + | '''GEOHASH''' key member [member ...] <br> | ||

| + | string/list<br> | ||

| + | '''GEOPOS''' key member [member ...]<br> | ||

| + | list/string<br> | ||

| + | '''GEOSEARCH''' key FROMMEMBER member | FROMLONLAT longitude latitude BYRADIUS radius M | KM | FT | MI | BYBOX width height M | KM | FT | MI [ ASC | DESC] [ COUNT count [ANY]] [WITHCOORD] [WITHDIST] [WITHHASH] <br> | ||

| + | list/string<br> | ||

| + | '''GEOSEARCHSTORE''' destination source FROMMEMBER member | FROMLONLAT longitude latitude BYRADIUS radius M | KM | FT | MI | BYBOX width height M | KM | FT | MI [ ASC | DESC] [ COUNT count [ANY]] [STOREDIST] <br> | ||

| + | numeric/string<br> | ||

| + | '''GET''' key <br> | ||

| + | numeric if it is a number, else string<br> | ||

| + | '''GETDEL''' key <br> | ||

| + | numeric (only if value consists of digits)/string <br> | ||

| + | '''GETEX''' key [ EX seconds | PX milliseconds | EXAT unix-time-seconds | PXAT unix-time-milliseconds | PERSIST] <br><br> | ||

| + | string/int/null<br> | ||

| + | '''GETRANGE''' key start end <br> | ||

| + | numeric if it is only digits, else string<br> | ||

| + | '''HDEL''' key field [field ...] <br> | ||

| + | numeric/string<br> | ||

| + | |||

| + | '''HEXISTS''' key field <br> | ||

| + | string (“true”/”false”)<br> | ||

| + | '''HGET''' key field <br> | ||

| + | numeric (only if value consists of digits)/string<br> | ||

| + | '''HGETALL''' key<br> | ||

| + | string/map<br> | ||

| + | '''HINCRBY''' key field increment<br> | ||

| + | string/numeric<br> | ||

| + | '''HINCRBYFLOAT''' key field increment <br> | ||

| + | numeric/string<br> | ||

| + | '''HKEYS''' key <br> | ||

| + | set/string<br> | ||

| + | '''HLEN''' key <br> | ||

| + | numeric/string<br> | ||

| + | '''HMGET''' key field [field ...]<br> | ||

| + | string/list<br> | ||

| + | '''HMSET''' key field value [ field value ...] <br> | ||

| + | string (“OK”)<br> | ||

| + | |||

| + | '''HRANDFIELD''' key [ count [WITHVALUES]] <br> | ||

| + | With COUNT, list/string<br> | ||

| + | Without COUNT, string<br> | ||

| + | '''HSCAN''' key cursor [MATCH pattern] [COUNT count] <br> | ||

| + | list/string<br> | ||

| + | '''HSET''' key field value [ field value ...] <br> | ||

| + | numeric/string<br> | ||

| + | '''HSETNX''' key field value <br> | ||

| + | numeric/string<br> | ||

| + | '''HSTRLEN''' key field <br> | ||

| + | numeric/string<br> | ||

| + | '''HVALS''' key <br> | ||

| + | string/list<br> | ||

| + | '''INCR''' key <br> | ||

| + | numeric/string<br> | ||

| + | '''INCRBY''' key increment <br> | ||

| + | numeric/string<br> | ||

| + | '''INCRBYFLOAT''' key increment <br> | ||

| + | numeric/string<br> | ||

| + | '''INFO''' [section [section ...]] <br> | ||

| + | string<br> | ||

| + | '''KEYS''' pattern <br> | ||

| + | set/string<br> | ||

| + | '''LASTSAVE''' <br> | ||

| + | numeric/string<br> | ||

| + | '''LCS''' key1 key2 [LEN] [IDX] [MINMATCHLEN len] [WITHMATCHLEN] <br> | ||

| + | With no options, e.g. lcs a b, returns string.<br> | ||

| + | With LEN, returns numeric/string.<br> | ||

| + | With IDX and WITHMATCHLEN or just IDX, returns list/string<br> | ||

| + | '''LINDEX''' key index <br> | ||

| + | numeric/string if content is a number, string otherwise.<br> | ||

| + | '''LINSERT''' key BEFORE | AFTER pivot element <br> | ||

| + | string/int<br> | ||

| + | '''LLEN''' key<br> | ||

| + | string/int<br> | ||

| + | '''LMOVE''' source destination LEFT | RIGHT LEFT | RIGHT <br> | ||

| + | string if element is string, numeric if element is a number.<br> | ||

| + | '''LMPOP''' numkeys key [key ...] LEFT | RIGHT [COUNT count]<br> | ||

| + | list/string<br> | ||

| + | '''LPOP''' key [count] <br> | ||

| + | Without COUNT, string by default, numeric if element is a number. With COUNT, list/string.<br> | ||

| + | '''LPOS''' key element [RANK rank] [COUNT num-matches] [MAXLEN len] - <br> | ||

| + | With COUNT, list/string<br> | ||

| + | Without COUNT, numeric/string if element is a number, else string.<br> | ||

| + | '''LPUSH''' key element [element ...] <br> | ||

| + | numeric/string<br> | ||

| + | '''LPUSHX''' key element [element ...] <br> | ||

| + | numeric/string<br> | ||

| + | '''LRANGE''' key start stop <br> | ||

| + | list/string<br> | ||

| + | '''LREM''' key count element <br> | ||

| + | numeric/string<br> | ||

| + | '''LSET''' key index element <br> | ||

| + | string (“OK”)<br> | ||

| + | '''LTRIM''' key start stop <br> | ||

| + | string (“OK”)<br> | ||

| + | '''MGET''' key [key ...] <br> | ||

| + | list/string<br> | ||

| + | '''MSETNX''' key value [ key value ...] <br> | ||

| + | numeric/string<br> | ||

| + | '''PERSIST''' key <br> | ||

| + | numeric/string<br> | ||

| + | '''PEXPIRE''' key milliseconds [ NX | XX | GT | LT] <br> | ||

| + | numeric/string<br> | ||

| + | '''PEXPIREAT''' key unix-time-milliseconds [ NX | XX | GT | LT] <br> | ||

| + | numeric/string<br> | ||

| + | '''PEXPIRETIME''' key <br> | ||

| + | numeric/string<br> | ||

| + | '''PING''' [message]<br> | ||

| + | string/numeric if [message] consists of digits only, otherwise string.<br> | ||

| + | '''RANDOMKEY''' <br> | ||

| + | string, numeric/string if key consists of digits only.<br> | ||

| + | '''RENAME''' key newkey <br> | ||

| + | string (“OK”)<br> | ||

| + | '''RENAMENX''' key newkey <br> | ||

| + | numeric (1/0)<br> | ||

| + | '''RESTORE''' key ttl serialized-value [REPLACE] [ABSTTL] [IDLETIME seconds] [FREQ frequency] <br> | ||

| + | - string (“OK”)<br> | ||

| + | - Serialized value works differently in AMI because we do not have a base 32 binary string. Example usage below:<br> | ||

| + | - execute set a 1;<br> | ||

| + | - Binary b = execute dump a;<br> | ||

| + | - string s = execute RESTORE a 16597119999999 "${binaryToStr16(b)}" replace absttl;<br> | ||

| + | - string res = execute get a;<br> | ||

| + | - session.log(res);<br> | ||

| + | '''RPOP''' key [count] <br> | ||

| + | Without COUNT, numeric/string if the element is a number, otherwise string.<br> | ||

| + | With COUNT, list/string.<br> | ||

| + | '''RPUSH''' key element [element ...]<br> | ||

| + | - numeric/string<br> | ||

| + | '''RPUSHX''' key element [element ...] <br> | ||

| + | - numeric/string<br> | ||

| + | '''SADD''' key member [member ...] <br> | ||

| + | - numeric<br> | ||

| + | '''SAVE''' <br> | ||

| + | - string (“OK”)<br> | ||

| + | '''SCAN''' cursor [MATCH pattern] [COUNT count] [TYPE type] <br> | ||

| + | - list/string<br> | ||

| + | '''SCARD''' key <br> | ||

| + | - numeric/string<br> | ||

| + | '''SDIFF''' key [key ...] <br> | ||

| + | - string/list<br> | ||

| + | '''SDIFFSTORE''' destination key [key ...] <br> | ||

| + | - numeric/string<br> | ||

| + | '''SET''' key value [ NX | XX ] [GET] [ EX seconds | PX milliseconds | EXAT unix-time-seconds | PXAT unix-time-milliseconds | KEEPTTL ] <br> | ||

| + | - string (“OK”)<br> | ||

| + | '''SETBIT''' key offset value <br> | ||

| + | - numeric/string 1/0<br> | ||

| + | '''SETEX''' key seconds value<br> | ||

| + | - string (“OK”)<br> | ||

| + | '''SETNX''' key value <br> | ||

| + | - numeric (0/1)<br> | ||

| + | '''SETRANGE''' key offset value <br> | ||

| + | - numeric/string<br> | ||

| + | '''SINTER''' key [key ...] <br> | ||

| + | - set/string<br> | ||

| + | '''SINTERCARD''' numkeys key [key ...] [LIMIT limit]<br> | ||

| + | - numeric/string<br> | ||

| + | '''SINTERSTORE''' destination key [key ...] <br> | ||

| + | - numeric/string<br> | ||

| + | '''SISMEMBER''' key member <br> | ||

| + | - string (true/false)<br> | ||

| + | '''SMEMBERS''' key <br> | ||

| + | - set/string<br> | ||

| + | '''SMISMEMBER''' key member [member ...] <br> | ||

| + | - list/string<br> | ||

| + | '''SMOVE''' source destination member <br> | ||

| + | numeric<br> | ||

| + | |||

| + | '''SORT''' key [BY pattern] [LIMIT offset count] [GET pattern [GET pattern ...]] [ ASC | DESC] [ALPHA] [STORE destination] <br> | ||

| + | Without STORE, list/string.<br> | ||

| + | With STORE, numeric<br> | ||

| + | '''SORT_RO''' key [BY pattern] [LIMIT offset count] [GET pattern [GET pattern ...]] [ ASC | DESC] [ALPHA] <br> | ||

| + | list/string<br> | ||

| + | '''SPOP''' key [count]<br> | ||

| + | With COUNT, set/string.<br> | ||

| + | Without COUNT, numeric/string if the element is a number, otherwise string.<br> | ||

| + | '''SRANDMEMBER''' key [count] <br> | ||

| + | list/string<br> | ||

| + | '''SREM''' key member [member ...] <br> | ||

| + | numeric/string<br> | ||

| + | '''SSCAN''' key cursor [MATCH pattern] [COUNT count]<br> | ||

| + | list/string<br> | ||

| + | '''STRLEN''' key <br> | ||

| + | numeric/string<br> | ||

| + | '''SUBSTR''' key start end <br> | ||

| + | string<br> | ||

| + | '''SUNION''' key [key ...] <br> | ||

| + | set/string<br> | ||

| + | '''SUNIONSTORE''' destination key [key ...] <br> | ||

| + | numeric/string<br> | ||

| + | '''TIME''' <br> | ||

| + | list/string<br> | ||

| + | '''TOUCH''' key [key ...] <br> | ||

| + | numeric/string<br> | ||

| + | '''TTL''' key <br> | ||

| + | numeric/string<br> | ||

| + | '''TYPE''' key<br> | ||

| + | string<br> | ||

| + | '''UNLINK''' key <br> | ||

| + | numeric/string<br> | ||

| + | '''ZADD''' key [ NX | XX] [ GT | LT] [CH] [INCR] score member [ score member ...] <br> | ||

| + | numeric/string<br> | ||

| + | '''ZCARD''' key <br> | ||

| + | numeric/string<br> | ||

| + | '''ZCOUNT''' key min max <br> | ||

| + | numeric/string<br> | ||

| + | '''ZDIFF''' numkeys key [key ...] [WITHSCORES] <br> | ||

| + | set/string<br> | ||

| + | '''ZDIFFSTORE''' destination numkeys key [key ...] <br> | ||

| + | numeric/string<br> | ||

| + | '''ZINCRBY''' key increment member <br> | ||

| + | numeric/string<br> | ||

| + | '''ZINTER''' numkeys key [key ...] [WEIGHTS weight [weight ...]] [AGGREGATE SUM | MIN | MAX] [WITHSCORES] <br> | ||

| + | With WITHSCORES, list/string<br> | ||

| + | Without WITHSCORES, set/string<br> | ||

| + | |||

| + | '''ZINTERCARD''' numkeys key [key ...] [LIMIT limit] <br> | ||

| + | numeric/string<br> | ||

| + | '''ZINTERSTORE''' destination numkeys key [key ...] [WEIGHTS weight [weight ...]] [AGGREGATE SUM | MIN | MAX] <br> | ||

| + | numeric/string<br> | ||

| + | '''ZLEXCOUNT''' key min max <br> | ||

| + | numeric<br> | ||

| + | '''ZMPOP''' numkeys key [key ...] MIN | MAX [COUNT count] <br> | ||

| + | numeric/string<br> | ||

| + | '''ZMPOP''' numkeys key [key ...] MIN | MAX [COUNT count] <br> | ||

| + | list/string<br> | ||

| + | '''ZMSCORE''' key member [member ...] <br> | ||

| + | list/string<br> | ||

| + | '''ZPOPMAX''' key [count] <br> | ||

| + | list/string<br> | ||

| + | |||

| + | '''ZPOPMIN''' key [count] <br> | ||

| + | list/string<br> | ||

| + | '''ZRANDMEMBER''' key [ count [WITHSCORES]] <br> | ||

| + | list/string<br> | ||

| + | '''ZRANGE''' key start stop [ BYSCORE | BYLEX] [REV] [LIMIT offset count] [WITHSCORES] <br> | ||

| + | set/string<br> | ||

| + | '''ZRANGESTORE''' dst src min max [ BYSCORE | BYLEX] [REV] [LIMIT offset count] <br> | ||

| + | numeric/string<br> | ||

| + | '''ZRANK''' key member <br> | ||

| + | string/null<br> | ||

| + | '''ZREM''' key member [member ...] <br> | ||

| + | numeric/string<br> | ||

| + | '''ZREMRANGEBYLEX''' key min max <br> | ||

| + | numeric/string<br> | ||

| + | '''ZREMRANGEBYRANK''' key start stop <br> | ||

| + | numeric/string<br> | ||

| + | |||

| + | '''ZREMRANGEBYSCORE''' key min max <br> | ||

| + | numeric/string<br> | ||

| + | '''ZREVRANK''' key member<br> | ||

| + | numeric/string<br> | ||

| + | '''ZSCAN''' key cursor [MATCH pattern] [COUNT count]<br> | ||

| + | list/string<br> | ||

| + | '''ZSCORE''' key member<br> | ||

| + | numeric/string<br> | ||

| + | '''ZUNION''' numkeys key [key ...] [WEIGHTS weight [weight ...]] [AGGREGATE SUM | MIN | MAX] [WITHSCORES]<br> | ||

| + | list/string<br> | ||

| + | '''ZUNIONSTORE''' destination numkeys key [key ...] [WEIGHTS weight [weight ...]] [AGGREGATE SUM | MIN | MAX] <br> | ||

| + | numeric/string<br> | ||

Latest revision as of 15:48, 27 February 2024

Flat File Reader

Overview

The AMI Flat File Reader Datasource Adapter is a highly configurable adapter designed to process extremely large flat files into tables at rates exceeding 100 MB per second*. There are a number of directives which can be used to control how the flat file reader processes a file. Each line (delineated by a line feed) is considered independently for parsing. Note that the EXECUTE <sql> clause supports the full AMI SQL language.

*Using the Pattern Capture technique (_pattern) to extract 3 fields across a 4.080 GB text file containing 11,999,504 records. This generated a table of 11,999,504 records x 4 columns in 37,364 milliseconds (the additional column is the default linenum). Tested on a raid-2 7200rpm 2TB drive.

Generally speaking, the parser can handle four (4) different methods of parsing files:

Delimited list or ordered fields

Example data and query:

11232|1000|123.20

12412|8900|430.90

CREATE TABLE mytable AS USE _file="myfile.txt" _delim="|"

_fields="String account, Integer qty, Double px"

EXECUTE SELECT * FROM file

Key value pairs

Example data and query:

account=11232|quantity=1000|price=123.20

account=12412|quantity=8900|price=430.90

CREATE TABLE mytable AS USE _file="myfile.txt" _delim="|" _equals="="

_fields="String account, Integer qty, Double px"

EXECUTE SELECT * FROM file

Pattern Capture

Example data and query:

Account 11232 has 1000 shares at $123.20 px

Account 12412 has 8900 shares at $430.90 px

CREATE TABLE mytable AS USE _file="myfile.txt"

_fields="String account, Integer qty, Double px"

_pattern="account,qty,px=Account (.*) has (.*) shares at \\$(.*) px"

EXECUTE SELECT * FROM file

Raw Line

If you do not specify a _fields, _mappings, or _pattern directive, then the parser defaults to a simple row-per-line parser. A "line" column is generated containing the entire contents of each line from the file.

CREATE TABLE mytable AS USE _file="f.txt" EXECUTE SELECT * FROM FILE

Configuring the Adapter for first use

1. Open the datamodeler (In Developer Mode -> Menu Bar -> Dashboard -> Datamodel)

2. Choose the "Attach Datasource" button

3. Choose Flat File Reader

4. In the Add datasource dialog:

Name: Supply a user defined Name, ex: MyFiles

URL: /full/path/to/directory/containing/files (ex: /home/myuser/files )

(Keep in mind that the path is on the machine running AMI, not necessarily your local desktop)

5. Click "Add Datasource" Button

Accessing Files Remotely: You can also access files on remote machines using an AMI Relay. First install an AMI relay on the machine that contains the files, or at least has access to the files, you wish to read (see the AMI for the Enterprise documentation for details on how to install an AMI relay). Then in the Add Datasource wizard select the relay in the "Relay To Run On" dropdown.

General Directives

File name Directive (Required)

Syntax

_file=path/to/file

Overview

This directive controls the location of the file to read, relative to the datasource's url. Use the forward slash (/) to indicate directories (standard UNIX convention)

Examples

_file="data.txt" (Read the data.txt file, located at the root of the datasource's url)

_file="subdir/data.txt" (Read the data.txt file, found under the subdir directory)

Field definitions Directive (Required)

Syntax

_fields=col1_type col1_name, col2_type col2_name, ...

Overview

This directive controls the Column names that will be returned, along with their types. The order in which they are defined is the same as the order in which they are returned. If the column type is not supplied, the default is String. Special note on additional columns: If the line number (see _linenum directive) column is not supplied in the list, it will default to type integer and be added to the end of the table schema. Columns defined in the Pattern (see _pattern directive) but not defined in _fields will be added to the end of the table schema.

Types should be one of: String, Long, Integer, Boolean, Double, Float, UTC

Column names must be valid variable names.

Examples

_fields="String account,Long quantity" (define two columns)

_fields="fname,lname,int age" (define 3 columns, fname and lname default to String)
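As a rough illustration of the defaulting rule described above, the following Python sketch splits a _fields value into (type, name) pairs and falls back to String when no type is given. The function name parse_fields is hypothetical and this is not AMI's own parser; it only mirrors the behaviour described in this section.

```python
def parse_fields(spec):
    """Split a _fields value into (type, name) pairs.

    Hypothetical helper for illustration only; a column without an
    explicit type defaults to String, as described above.
    """
    columns = []
    for item in spec.split(","):
        parts = item.split()
        if not parts:
            continue  # ignore empty entries such as a trailing comma
        if len(parts) == 1:
            columns.append(("String", parts[0]))
        else:
            columns.append((parts[0], parts[1]))
    return columns


print(parse_fields("fname,lname,int age"))
```

The order of the returned pairs follows the order of the definition, matching the statement that columns are returned in the order in which they are defined.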

Directives for parsing Delimited list of ordered Fields

_file=file_name (Required, see general directives)

_fields=col1_type col1_name, ... (Required, see general directives)

_delim=delim_string (Required)

_conflateDelim=true|false (Optional. Default is false)

_quote=single_quote_char (Optional)

_escape=single_escape_char (Optional)

The _delim indicates the char (or chars) used to separate each field (If _conflateDelim is true, then 1 or more consecutive delimiters are treated as a single delimiter). The _fields is an ordered list of types and field names for each of the delimited fields. If the _quote is supplied, then a field value starting with quote will be read until another quote char is found, meaning delims within quotes will not be treated as delims. If the _escape char is supplied then when an escape char is read, it is skipped and the following char is read as a literal.

Examples

_delim="|"

_fields="code,lname,int age"

_quote="'"

_escape="\\"

This defines a pattern such that:

11232-33|Smith|20

'1332|ABC'||30

Account\|112|Jones|18

Maps to:

| code | lname | age |

|---|---|---|

| 11232-33 | Smith | 20 |

| 1332&#124;ABC | | 30 |

| Account&#124;112 | Jones | 18 |
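The interaction of _delim, _quote, _escape and _conflateDelim can be sketched in Python as follows. This is a minimal approximation written for this guide, not the adapter's actual code; split_fields and its parameter names are hypothetical, and it assumes a quote only opens at the start of a field.

```python
def split_fields(line, delim="|", quote=None, escape=None, conflate=False):
    """Split one line into fields, honoring quote and escape characters."""
    if not delim:
        raise ValueError("delim must not be empty")
    fields, buf = [], []
    in_quote = False
    i = 0
    while i < len(line):
        ch = line[i]
        if escape and ch == escape and i + 1 < len(line):
            # Skip the escape char and take the next char literally.
            buf.append(line[i + 1])
            i += 2
            continue
        if quote and ch == quote and (in_quote or not buf):
            # A quote opens at the start of a field and closes on the next quote.
            in_quote = not in_quote
            i += 1
            continue
        if not in_quote and line.startswith(delim, i):
            fields.append("".join(buf))
            buf = []
            i += len(delim)
            if conflate:
                # Treat a run of consecutive delimiters as a single one.
                while line.startswith(delim, i):
                    i += len(delim)
            continue
        buf.append(ch)
        i += 1
    fields.append("".join(buf))
    return fields


for row in ["11232-33|Smith|20", "'1332|ABC'||30", "Account\\|112|Jones|18"]:
    print(split_fields(row, quote="'", escape="\\"))
```

Run against the three sample rows above, the sketch keeps the delimiter inside the quoted and escaped values and yields an empty lname for the second row.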

Directives for parsing Key Value Pairs

_file=file_name (Required, see general directives)

_fields=col1_type col1_name, ... (Required, see general directives)

_delim=delim_string (Required)

_conflateDelim=true|false (Optional. Default is false)

_equals=single_equals_char (Required)

_mappings=from1=to1,from2=to2,... (Optional)

_quote=single_quote_char (Optional)

_escape=single_escape_char (Optional)

The _delim indicates the char (or chars) used to separate each field (If _conflateDelim is true, then 1 or more consecutive delimiters are treated as a single delimiter). The _equals char is used to indicate the key/value separator. The _fields is an ordered list of types and field names for each of the delimited fields. If the _quote is supplied, then a field value starting with quote will be read until another quote char is found, meaning delims within quotes will not be treated as delims. If the _escape char is supplied then when an escape char is read, it is skipped and the following char is read as a literal.

The optional _mappings directive allows you to map keys within the flat file to field names specified in the _fields directive. This is useful when a file has key names that are not valid field names, or a file has multiple key names that should be used to populate the same column.

Examples

_delim="|"

_equals="="

_fields="code,lname,int age"

_mappings="20=code,21=lname,22=age"

_quote="'"

_escape="\\"

This defines a pattern such that:

code=11232-33|lname=Smith|age=20

code='1332|ABC'|age=30

20=Act\|112|21=J|22=18 (Note: this row will work due to the _mappings directive)

Maps to:

| code | lname | age |

|---|---|---|

| 11232-33 | Smith | 20 |

| 1332&#124;ABC | | 30 |

| Act&#124;112 | J | 18 |
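The role of _equals and _mappings can be illustrated with a short Python sketch. It is a simplification under stated assumptions: parse_key_values is a hypothetical name, values stay as strings, and quoting and escaping are deliberately left out, so it only handles rows without delimiters inside values.

```python
def parse_key_values(line, fields, delim="|", equals="=", mappings=None):
    """Map key/value pairs on one line onto the declared field names."""
    mappings = mappings or {}
    row = {name: None for name in fields}  # missing keys stay empty
    for pair in line.split(delim):
        if equals not in pair:
            continue  # not a key/value pair
        key, value = pair.split(equals, 1)
        column = mappings.get(key, key)  # translate the key if a mapping exists
        if column in row:
            row[column] = value
    return row


fields = ["code", "lname", "age"]
mappings = {"20": "code", "21": "lname", "22": "age"}
print(parse_key_values("code=11232-33|lname=Smith|age=20", fields, mappings=mappings))
print(parse_key_values("20=A112|21=J|22=18", fields, mappings=mappings))
```

Keys that are neither a declared field nor a mapped key are ignored in this sketch; whether the adapter does the same is an assumption.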

Directives for Pattern Capture

_file=file_name (Required, see general directives)

_fields=col1_type col1_name, ... (Optional, see general directives)

_pattern=col1_type col1_name, ...=regex_with_grouping (Required)

The _pattern must start with a list of column names, followed by an equal sign (=) and then a regular expression with grouping (this is dubbed a column-to-pattern mapping). The regular expression's first grouping value will be mapped to the first column, 2nd grouping to the second and so on.

If a column is already defined in the _fields directive, then it's preferred to not include the column type in the _pattern definition.

For multiple column-to-pattern mappings, use the \n (new line) to separate each one.

Examples

_pattern="fname,lname,int age=User (.*) (.*) is (.*) years old"

This defines a pattern such that:

User John Smith is 20 years old

User Bobby Boy is 30 years old

Maps to:

| fname | lname | age |

|---|---|---|

| John | Smith | 20 |

| Bobby | Boy | 30 |

_pattern="fname,lname,int age=User (.*) (.*) is (.*) years old\n lname,fname,int weight=Customer (.*),(.*) weighs (.*) pounds"

This defines two patterns such that:

User John Smith is 20 years old

Customer Boy,Bobby weighs 130 pounds

Maps to:

| fname | lname | age | weight |

|---|---|---|---|

| John | Smith | 20 | |

| Bobby | Boy | | 130 |
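The column-to-pattern mapping can be approximated in Python with the standard re module. This is a sketch only: the function names are hypothetical, the directive is passed with a real newline between mappings, and it assumes each line must match a pattern in full and that the first matching pattern wins, neither of which is confirmed by this guide.

```python
import re


def parse_pattern_directive(directive):
    """Split a _pattern value into (column_names, compiled_regex) pairs."""
    mappings = []
    for entry in directive.split("\n"):
        columns, regex = entry.strip().split("=", 1)
        # Drop an optional leading type ("int age" -> "age").
        names = [c.strip().split()[-1] for c in columns.split(",")]
        mappings.append((names, re.compile(regex)))
    return mappings


def capture(line, mappings):
    """Return {column: captured text} for the first pattern matching the line."""
    for names, regex in mappings:
        match = regex.fullmatch(line)
        if match:
            return dict(zip(names, match.groups()))
    return None


directive = ("fname,lname,int age=User (.*) (.*) is (.*) years old\n"
             " lname,fname,int weight=Customer (.*),(.*) weighs (.*) pounds")
patterns = parse_pattern_directive(directive)
print(capture("User John Smith is 20 years old", patterns))
print(capture("Customer Boy,Bobby weighs 130 pounds", patterns))
```

Columns that a given pattern does not capture (weight for the first line, age for the second) are simply absent from the returned dictionary, which corresponds to the empty cells in the table above.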

Optional Line Number Directives

Skipping Lines Directive (optional)

Syntax

_skipLines=number_of_lines

Overview

This directive controls the number of lines to skip from the top of the file. This is useful for ignoring "junk" at the top of a file. If not supplied, then no lines are skipped. From a performance standpoint, skipping lines is highly efficient.

Examples

_skipLines="0" (this is the default, don't skip any lines)

_skipLines="1" (skip the first line, for example if there is a header)

Line Number Column Directive (optional)

Syntax

_linenum=column_name

Overview

This directive controls the name of the column that will contain the line number. If not supplied, the default is "linenum". Notes about the line number: The first line is line number 1, and skipped/filtered out lines are still considered in numbering. For example, if _skipLines=2, then the first returned line will have a line number of 3.

Examples

_linenum="" (A line number column is not included in the table)

_linenum="linenum" (The column linenum will contain line numbers, this is the default)

_linenum="rownum" (The column rownum will contain line numbers)
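The numbering rule (first line is 1, skipped lines still count) can be shown with a few lines of Python. The helper name numbered_lines is hypothetical and the sketch is only meant to make the _skipLines and _linenum interaction concrete.

```python
def numbered_lines(lines, skip_lines=0):
    """Yield (line_number, text); numbering starts at 1 and counts skipped lines."""
    for number, text in enumerate(lines, start=1):
        if number <= skip_lines:
            continue  # skipped, but it still consumed a line number
        yield number, text


print(list(numbered_lines(["header 1", "header 2", "a", "b"], skip_lines=2)))
```

With two lines skipped, the first returned row carries line number 3, as in the example given for the _linenum directive.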

Optional Line Filtering Directives

Filtering Out Lines Directive (optional)

Syntax

_filterOut=regex

Overview

Any line that matches the supplied regular expression will be ignored. If not supplied, then no lines are filtered out. From a performance standpoint, this filter is applied before any other parsing, so ignoring lines with a filter-out directive is faster than, for example, using a WHERE clause.

Examples

_filterOut="Test" (ignore any lines containing the text Test)

_filterOut="^Comment" (ignore any lines starting with Comment)

_filterOut="This|That" (ignore any lines containing the text This or That)

Filtering In Lines Directive (optional)

Syntax

_filterIn=regex

Overview

Only lines that match the supplied regular expression will be considered. If not supplied, then all lines are considered. From a performance standpoint, this filter is applied before any other parsing, so narrowing down the lines with a filter-in directive is faster than, for example, using a WHERE clause. If you use a grouping (..) inside the regular expression, then only the contents of the first grouping will be considered for parsing.

Examples

_filterIn="3Forge" (ignore any lines that don't contain the word 3Forge)

_filterIn="^Outgoing" (ignore any lines that don't start with Outgoing)

_filterIn="Data(.*)" (ignore any lines that don't start with Data, and only consider the text after the word Data for processing)
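The two filtering directives can be approximated with the following Python sketch. It is an illustration under stated assumptions rather than AMI's code: filter_lines is a hypothetical helper, the expressions are applied with substring search (in line with the "containing the text" wording of the examples), and the filter-out check is assumed to run before the filter-in check.

```python
import re

def filter_lines(lines, filter_in=None, filter_out=None):
    """Apply _filterOut / _filterIn style regex filters before any parsing."""
    kept = []
    for line in lines:
        if filter_out and re.search(filter_out, line):
            continue  # dropped: matches the filter-out expression
        if filter_in:
            match = re.search(filter_in, line)
            if not match:
                continue  # dropped: does not match the filter-in expression
            if match.re.groups:
                line = match.group(1)  # keep only the first grouping
        kept.append(line)
    return kept

log = ["Data 11232|1000", "Comment ignore me", "Data 12412|8900", "Test Data 1|2"]
result = filter_lines(log, filter_in="Data(.*)", filter_out="Test")
```

Only the text captured by the first grouping survives, so the word Data itself is not passed on to the parser.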

Python Adapter Guide

1. Introduction

The Python adapter is a library that gives Python scripts access, via sockets, to both the AMI console port and the real-time port.

The adapter is meant to be integrated with external Python code and does not contain a __main__ entry point. To try the simple Python demo, switch your branch to example and run demo.py.

The adapter's default arguments should work with AMI out of the box, and each can be overridden through input arguments. To view the full set of arguments, run the program with the --help argument.

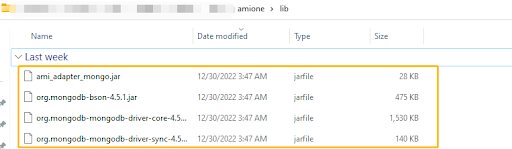

MongoDB adapter

1. Setup

(a). Take the ami_adapter_mongo.9593.dev.obv.tar.gz package and copy its contents into the lib directory of your currently installed AMI (located at ./amione/lib/). Make sure the package is fully unpacked, so that the lib directory contains the individual files ending with .jar rather than the archive itself.

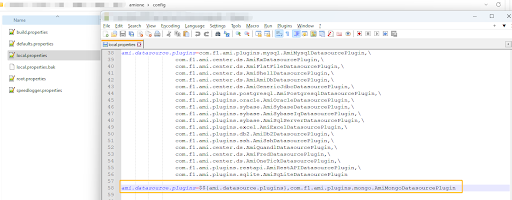

(b). Go to your config directory (located at ami\amione\config) and edit local.properties, creating the file if it does not exist.

Search for ami.datasource.plugins, add the Mongo Plugin to the list of datasource plugins:

ami.datasource.plugins=$${ami.datasource.plugins},com.f1.ami.plugins.mongo.AmiMongoDatasourcePlugin

Note: $${ami.datasource.plugins} references the existing plugin list. Do not put a space before or after the comma.

Here is an example of what it might look like:

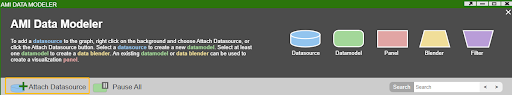

(c). Restart AMI

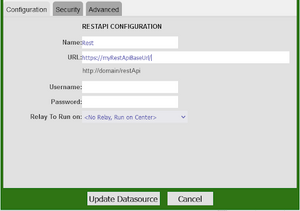

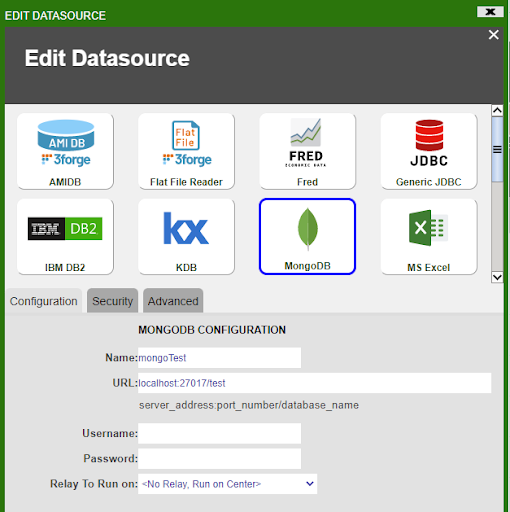

(d). Go to Dashboard -> Datamodel and select Attach Datasource.

(e). Select MongoDB as the Datasource. Give your Datasource a name and configure the URL.

The URL should take the following format:

URL: server_address:port_number/Your_Database_Name

In the demonstration below, the URL is localhost:27017/test. MongoDB listens on port 27017 by default, and the example connects to the test database.
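To make the three parts of the URL explicit, here is a small Python sketch that splits a value of this shape. The helper parse_mongo_url is hypothetical and is not how the adapter itself reads the URL; it only illustrates the server_address:port_number/Your_Database_Name layout.

```python
def parse_mongo_url(url):
    """Split 'server_address:port_number/Your_Database_Name' into its parts."""
    host_port, _, database = url.partition("/")
    host, _, port = host_port.partition(":")
    if not host or not port.isdigit() or not database:
        raise ValueError(f"expected host:port/database, got {url!r}")
    return host, int(port), database

host, port, database = parse_mongo_url("localhost:27017/test")
```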

2. Send queries to MongoDB in AMI

The AMI MongoDB adapter allows you to query a MongoDB datasource and output the results as SQL tables.

This section will demonstrate how to query MongoDB in AMI. The general syntax for querying MongoDB is:

CREATE TABLE Your_Table_Name AS USE EXECUTE <Your_MongoDB_query>

Note that whatever comes after the keyword EXECUTE is the MongoDB query, which should follow the MongoDB query syntax.

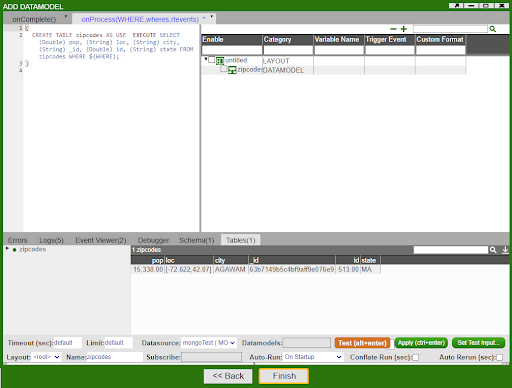

(a). Create an AMI SQL table from a MongoDB collection

(i). MongoDB collection example1

In the MongoDB shell, let’s create a collection called “zipcodes” and insert a row into it.

db.createCollection("zipcodes");

db.zipcodes.insert({id:'01001',city:'AGAWAM', loc:[-72.622,42.070],pop:15338, state:'MA'});

(ii). Query this collection in AMI

CREATE TABLE zips AS USE EXECUTE SELECT (String)_id,(String)city,(String)loc,(Integer)pop,(String)state FROM zipcodes WHERE ${WHERE};

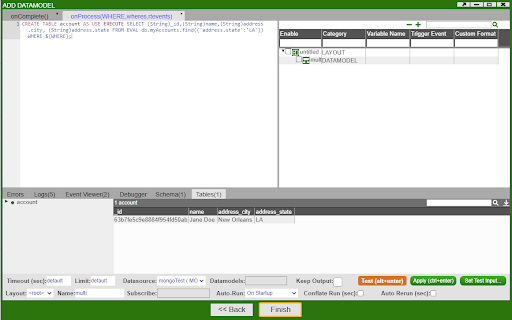

(b). Create an AMI SQL table from a MongoDB collection with nested columns

(i). Inside the MongoDB shell, we can create a collection named "myAccounts" and insert one row into the collection.

db.createCollection("myAccounts");

db.myAccounts.insert({id:1,name:'John Doe', address:{city:'New York City', state:'New York'}});

(ii). Query this collection in AMI

CREATE TABLE account AS USE EXECUTE SELECT (String)_id,(String)name,(String)address.city, (String)address.state FROM myAccounts WHERE ${WHERE};

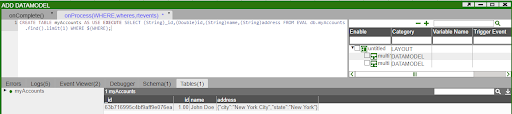

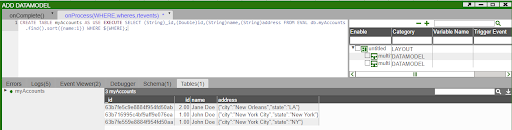
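The dotted column names in the query above (address.city, address.state) address fields inside the nested address document. The following Python sketch shows that kind of path resolution on a plain dictionary. It is only an illustration: resolve_path is a hypothetical helper, and returning None for a missing field is an assumption, not documented adapter behavior.

```python
def resolve_path(document, dotted_path):
    """Follow a dotted path such as 'address.city' through nested dicts."""
    value = document
    for key in dotted_path.split("."):
        if not isinstance(value, dict) or key not in value:
            return None  # assumption: a missing field yields an empty cell
        value = value[key]
    return value

doc = {"id": 1, "name": "John Doe",
       "address": {"city": "New York City", "state": "New York"}}
row = {path: resolve_path(doc, path)
       for path in ("name", "address.city", "address.state")}
```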

(c). Create an AMI SQL table from a MongoDB collection using EVAL methods

(i). Find

Let’s use the myAccounts MongoDB collection that we created before and insert some rows into it. Inside MongoDB shell:

db.myAccounts.insert({id:1,name:'John Doe', address:{city:'New York City', state:'NY'}});

db.myAccounts.insert({id:2,name:'Jane Doe', address:{city:'New Orleans', state:'LA'}});

To create a SQL table from MongoDB containing all rows whose address state is LA, enter the following command in the AMI script and hit test:

CREATE TABLE account AS USE EXECUTE SELECT (String)_id,(String)name,(String)address.city, (String)address.state FROM EVAL db.myAccounts.find({'address.state':'LA'}) WHERE ${WHERE};

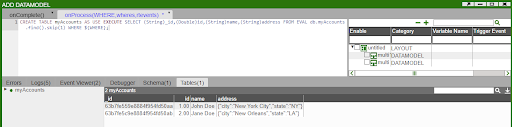

(ii). Limit

CREATE TABLE myAccounts AS USE EXECUTE SELECT (String)_id,(Double)id,(String)name,(String)address FROM EVAL db.myAccounts.find().limit(1) WHERE ${WHERE};

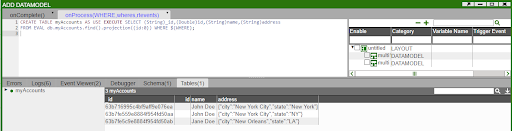

(iii). Skip

CREATE TABLE myAccounts AS USE EXECUTE SELECT (String)_id,(Double)id,(String)name,(String)address FROM EVAL db.myAccounts.find().skip(1) WHERE ${WHERE};

(iv). Sort

CREATE TABLE myAccounts AS USE EXECUTE SELECT (String)_id,(Double)id,(String)name,(String)address FROM EVAL db.myAccounts.find().sort({name:1}) WHERE ${WHERE};

(v). Projection

CREATE TABLE myAccounts AS USE EXECUTE SELECT (String)_id,(Double)id,(String)name,(String)address FROM EVAL db.myAccounts.find().projection({id:0}) WHERE ${WHERE};
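For readers less familiar with the MongoDB cursor methods used above, this Python sketch reproduces their effect on an in-memory list of documents. It does not talk to MongoDB or AMI; it is a plain-Python analogy, and the variable names are illustrative only.

```python
docs = [
    {"id": 1, "name": "John Doe", "address": {"city": "New York City", "state": "NY"}},
    {"id": 2, "name": "Jane Doe", "address": {"city": "New Orleans", "state": "LA"}},
]

# find({'address.state':'LA'}): keep documents whose nested state equals LA
found = [d for d in docs if d["address"]["state"] == "LA"]

# find().limit(1): keep at most the first document
limited = docs[:1]

# find().skip(1): drop the first document
skipped = docs[1:]

# find().sort({name:1}): ascending order by name
ordered = sorted(docs, key=lambda d: d["name"])

# find().projection({id:0}): return every field except id
projected = [{k: v for k, v in d.items() if k != "id"} for d in docs]
```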

Shell Command Reader

Overview

The AMI Shell Command Datasource Adapter is a highly configurable adapter designed to execute shell commands and capture the stdout, stderr and exit code. There are a number of directives which can be used to control how the command is executed, including setting environment variables and supplying data to be passed to stdin. The adapter processes the output from the command. Each line (delimited by a line feed) is considered independently for parsing. Note that the EXECUTE <sql> clause supports the full AMI SQL language.

Note that running the command produces three tables:

- stdout - Contains the contents of standard output

- stderr - Contains the contents of standard error

- exitCode - Contains the exit code of the process

(You can limit which tables are returned using the _capture directive)
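The three outputs correspond to what any process launcher can collect from a child process. As a rough analogy only (this is not how AMI runs commands), the Python standard library's subprocess.run captures the same three pieces. The command here is a stand-in Python one-liner chosen so that the sketch runs on any platform.

```python
import subprocess
import sys

# Stand-in command: writes one line to each stream and exits with code 3.
command = [sys.executable, "-c",
           "import sys; print('out line'); "
           "print('err line', file=sys.stderr); sys.exit(3)"]
completed = subprocess.run(command, capture_output=True, text=True)

# One entry per table described above; lines are split on line feeds.
tables = {
    "stdout": completed.stdout.splitlines(),
    "stderr": completed.stderr.splitlines(),
    "exitCode": completed.returncode,
}
```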

Generally speaking, the parser can handle four (4) different methods of parsing:

Delimited list of ordered fields

Example data and query:

11232|1000|123.20

12412|8900|430.90

CREATE TABLE mytable AS USE _cmd="my_cmd" _delim="|"

_fields="String account, Integer qty, Double px"

EXECUTE SELECT * FROM cmd
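As a rough illustration of delimited parsing, the Python sketch below assigns each delimited value to the field at the same position and converts it to the declared type. The helpers parse_fields and parse_delimited and the CASTS table are hypothetical, and the type conversions are an assumption about what String, Integer and Double imply.

```python
# Assumed mapping from the type names used in _fields to Python types.
CASTS = {"String": str, "Integer": int, "Double": float}

def parse_fields(fields):
    """Turn 'String account, Integer qty' into [('account', str), ('qty', int)]."""
    parsed = []
    for field in fields.split(","):
        type_name, column = field.split()
        parsed.append((column, CASTS[type_name]))
    return parsed

def parse_delimited(line, fields, delim):
    """Assign each delimited value to the field at the same position."""
    return {column: cast(value)
            for (column, cast), value in zip(parse_fields(fields), line.split(delim))}

row = parse_delimited("11232|1000|123.20",
                      "String account, Integer qty, Double px", "|")
```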

Key value pairs

Example data and query:

account=11232|quantity=1000|price=123.20

account=12412|quantity=8900|price=430.90

CREATE TABLE mytable AS USE _cmd="my_cmd" _delim="|" _equals="="

_fields="String account, Integer quantity, Double price"

EXECUTE SELECT * FROM cmd
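Key value parsing splits each line twice: first on the _delim character, then on the _equals character. A minimal Python sketch of that idea follows. The helper parse_key_values is hypothetical, and values are left as strings rather than converted to the declared field types.

```python
def parse_key_values(line, delim, equals):
    """Split a line into pairs on `delim`, then each pair into key and value on `equals`."""
    row = {}
    for pair in line.split(delim):
        key, _, value = pair.partition(equals)
        row[key] = value
    return row

row = parse_key_values("account=11232|quantity=1000|price=123.20",
                       delim="|", equals="=")
```

Because values are looked up by key rather than by position, the order of the pairs within a line does not matter.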

Pattern Capture

Example data and query:

Account 11232 has 1000 shares at $123.20 px

Account 12412 has 8900 shares at $430.90 px

CREATE TABLE mytable AS USE _cmd="my_cmd"

_fields="String account, Integer qty, Double px"

_pattern="account,qty,px=Account (.*) has (.*) shares at \\$(.*) px"

EXECUTE SELECT * FROM cmd

Raw Line

If you do not specify a _fields, _mapping or _pattern directive, then the parser defaults to a simple row-per-line parser. A "line" column is generated containing the entire contents of each line from the command's output.

CREATE TABLE mytable AS USE _cmd="my_cmd" EXECUTE SELECT * FROM cmd

Configuring the Adapter for first use

1. Open the datamodeler (In Developer Mode -> Menu Bar -> Dashboard -> Datamodel)

2. Choose the "Add Datasource" button

3. Choose Shell Command Reader

4. In the Add datasource dialog:

Name: Supply a user defined Name, ex: MyShell

URL: /full/path/to/path/of/working/directory (ex: /home/myuser/files )

(Keep in mind that the path is on the machine running AMI, not necessarily your local desktop)

5. Click "Add Datasource" Button

Running Commands Remotely: You can also execute commands on remote machines using an AMI Relay. First install an AMI relay on the machine that the command should be executed on (see the AMI for the Enterprise documentation for details on how to install an AMI relay). Then, in the Add Datasource wizard, select the relay in the "Relay To Run On" dropdown.

General Directives

Command Directive (Required)

Syntax

_cmd="command to run"

Overview

This directive controls the command to execute.

Examples

_cmd="ls -lrt" (execute ls -lrt)

_cmd="ls | sort" (execute ls and pipe that into sort)

_cmd="dir /s" (execute dir on a Windows system)

Supplying Standard Input (Optional)

Syntax

_stdin="text to pipe into stdin"

Overview

This directive controls what data is piped into the standard input (stdin) of the process to run.

Example

_cmd="cat > out.txt" _stdin="hello world" (will pipe "hello world" into out.txt)
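Supplying standard input to a child process works the same way in most process APIs. As an analogy only (not AMI's implementation), the Python sketch below passes text to a child's stdin with the input parameter of subprocess.run. The child is a stand-in Python one-liner used in place of cat so that the sketch runs on any platform.

```python
import subprocess
import sys

# Stand-in for `cat`: echoes whatever arrives on stdin, in upper case.
command = [sys.executable, "-c",
           "import sys; sys.stdout.write(sys.stdin.read().upper())"]

# `input` plays the role of the _stdin directive.
completed = subprocess.run(command, input="hello world",
                           capture_output=True, text=True)
echoed = completed.stdout
```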

Controlling what is captured from the Process (Optional)

Syntax

_capture="comma_delimited_list" (default is stdout,stderr,exitCode)

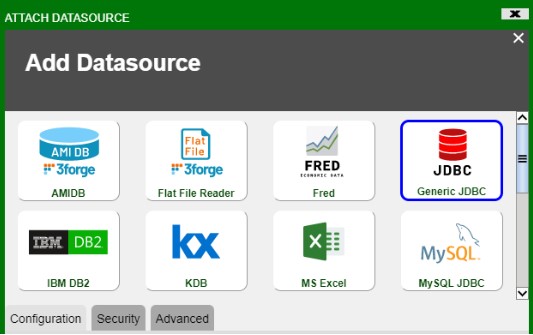

Overview